A veteran NPR host says Google stole his voice for NotebookLM. Whether he wins or loses, the case exposes a privacy gap that affects us all.

Meta Description: NPR host David Greene is suing Google for voice cloning. Learn how AI voice theft works, what laws protect you (AB 2602, AB 1836), and how to safeguard your voice data.

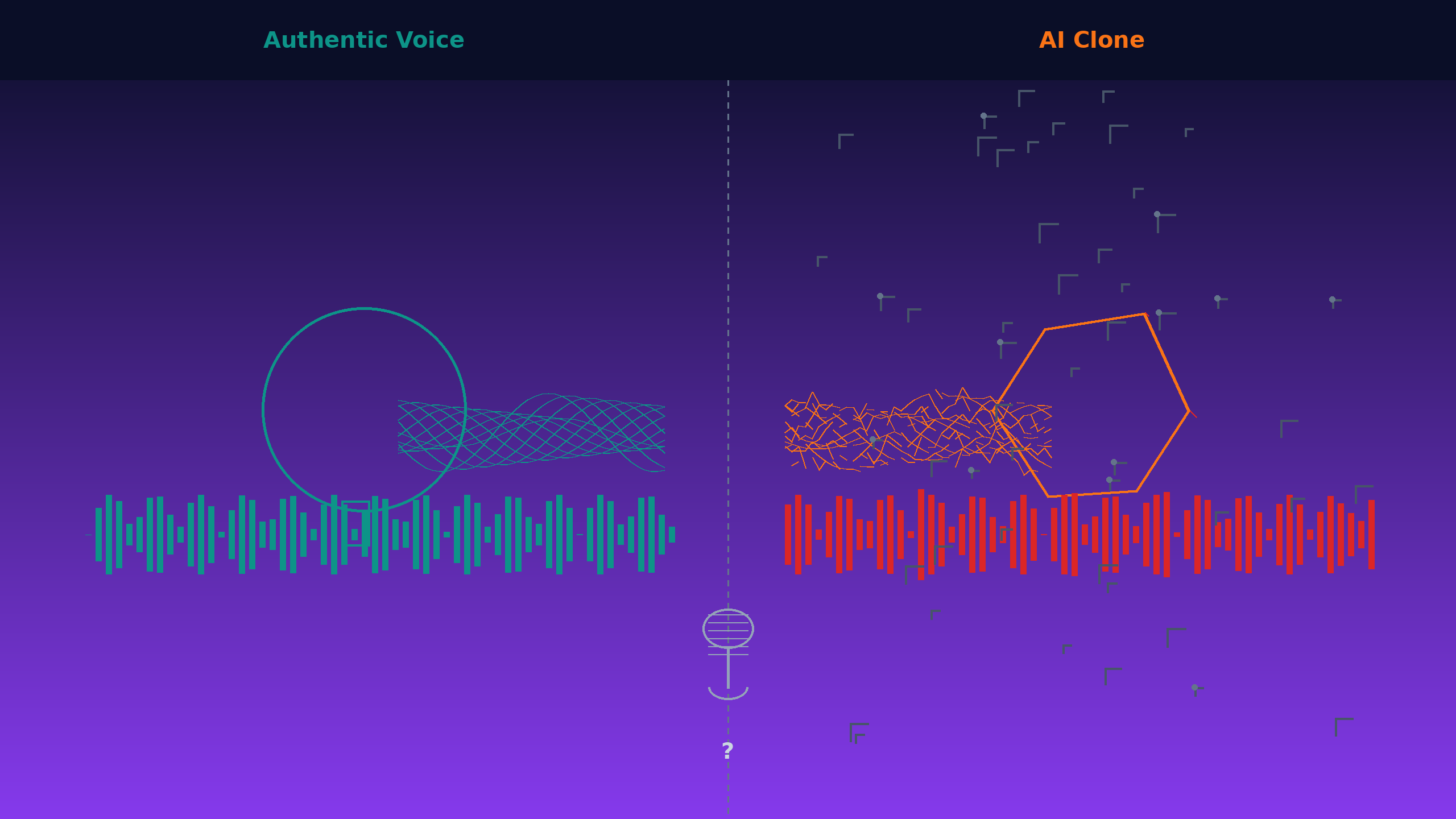

When David Greene listened to Google’s NotebookLM for the first time last fall, he experienced something deeply unsettling—hearing himself speak words he never said.

“I was, like, completely freaked out,” Greene told the Washington Post. “It’s this eerie moment where you feel like you’re listening to yourself.”

The former NPR Morning Edition host, whose warm baritone woke up 13 million Americans daily for eight years, is now suing Google in California Superior Court. He claims the tech giant replicated his distinctive voice for NotebookLM’s popular “Audio Overviews” feature—without asking permission or paying a cent.

Google denies it, claiming the voice belongs to a paid professional actor.

But here’s what should concern you, regardless of who’s telling the truth:

We now live in a world where AI can potentially capture and clone your voice convincingly enough that even your family can’t tell the difference. And the laws protecting you? They’re playing catch-up.

Think you’re safe because you’re not famous? Consider this:

Every time you say “Hey Google” or “Alexa,” you’re creating voice samples. Every customer service call. Every Zoom meeting. Every voice message. That data goes somewhere—and it’s increasingly being used to train AI voice models.

You might never know if your voice contributed to an AI system. You probably already “consented” in some terms of service agreement you didn’t read. And even if you found out, proving it and doing anything about it would be nearly impossible.

David Greene’s lawsuit matters because it forces the question: In the age of voice AI, who owns your voice? And what happens when technology moves faster than our ability to consent to how it’s used?

Your Voice Is Not Just Sound—It’s You

Think about what makes your voice yours. Not just the sound waves, but the pauses. The rhythm. The way you emphasize certain words. The little verbal tics you’ve tried to eliminate and can’t. The warmth (or edge) that comes through when you’re talking to someone you love (or can’t stand).

David Greene spent two decades crafting his voice into something distinctive. He grew up in Pittsburgh idolizing baseball announcer Lanny Frattare. In high school, he turned morning announcements into a radio show. He wrote his college application essay about wanting to be a public radio host. He learned the intimate art of speaking to millions as if talking to one friend.

“My voice is, like, the most important part of who I am,” Greene has said.

And he’s right—scientifically speaking.

Your voice is biometric data. Like your fingerprint. Like your iris scan. Like the unique pattern of blood vessels in your palm. Voice biometrics are increasingly used for authentication: your bank may use your voice to verify your identity, some airports are experimenting with voice-based security, and your phone’s voice assistant can recognize you by voice alone.

This makes voice fundamentally different from, say, your hairstyle or fashion sense. It’s not something you chose or can easily change. It’s a biological signature, as unique as DNA, that you carry with you every time you open your mouth.

And unlike DNA, you spray it everywhere. Every phone call. Every voice memo. Every Zoom meeting. Every voice message to a friend. Every time you talk to Alexa, Siri, or Google Assistant.

Which raises an uncomfortable question: Who owns all those voice samples? And what can they do with them?

The NotebookLM Phenomenon: How an AI Podcast Tool Sparked a Voice Rights Lawsuit

Google’s NotebookLM seemed innocent enough when it added its “Audio Overviews” feature in 2024. The feature transforms documents into conversational, podcast-style summaries. Upload a research paper, and two AI hosts, one male and one female, will banter their way through the key points, making dense material accessible.

The feature became a “sleeper hit” for Google in the AI arms race. By December 2024, Spotify had integrated it into Spotify Wrapped, offering personalized AI-hosted podcasts about your listening habits to millions of users.

People loved it. They also noticed something odd about the voices.

“So … I’m probably the 148th person to ask this,” a former colleague emailed Greene last fall, “but did you license your voice to Google? It sounds very much like you!”

Greene wasn’t the only one people thought they heard. Online speculation linked the voices to various podcasters and radio hosts. But the Greene comparisons kept piling up. His wife’s “eyes popped” when she heard it. Friends, family, and professional contacts reached out asking if he’d made a deal.

He hadn’t.

The 53% Confidence Problem: Why Proving Voice Theft Is So Hard

Greene’s lawsuit, filed in January 2026 by the powerhouse law firm Boies Schiller Flexner, makes several specific claims:

- Google replicated his distinctive voice without payment or permission
- The AI mimics his cadence, intonation, and speech rhythms
- It even copies his filler words, the “uhhs” and “likes” he’s worked for years to minimize

To support these claims, Greene’s team hired an AI forensic firm to analyze the artificial voice against recordings of the real Greene. Their conclusion: 53% to 60% confidence that Greene’s voice was used to train the model.

What does that percentage actually mean?

Think of it like a DNA match probability, but much less reliable. AI forensic analysts compare acoustic features—pitch patterns, vocal timbre, speech rhythm, pronunciation quirks. A 53-60% confidence level means “more likely than not, but far from certain.”

For AI voice analysis, experts say that’s actually “relatively high.” Why? Because AI-generated voices don’t contain direct copies of training data. They synthesize patterns learned from potentially thousands of voices. Detecting one specific voice contributor in that mix is like identifying one specific coffee bean in a blended cup.
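To make “comparing acoustic features” a little more concrete, here is a minimal Python sketch. It assumes each voice has already been reduced to a short vector of normalized features (the feature list, speaker names, and numbers are all invented for illustration) and scores the match with cosine similarity, a common generic measure. It is not the method Greene’s forensic firm used, and a similarity score is not the same thing as the lawsuit’s “53% to 60% confidence” figure.

```python
import math

# Hypothetical, pre-normalized acoustic features (each scaled to 0..1), in this order:
# mean pitch, pitch variability, speaking rate, pause frequency, filler-word rate.
# Real forensic tools extract far more features directly from audio.


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two feature vectors (1.0 = identical direction)."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("feature vectors must be non-zero")
    return dot / (norm_a * norm_b)


def rank_candidates(synthetic: list[float], candidates: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Score a synthetic voice against each candidate speaker, most similar first."""
    scores = {name: cosine_similarity(synthetic, feats) for name, feats in candidates.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    ai_voice = [0.42, 0.55, 0.60, 0.35, 0.30]
    candidates = {
        "broadcaster_a": [0.40, 0.58, 0.62, 0.33, 0.28],
        "broadcaster_b": [0.45, 0.50, 0.57, 0.38, 0.31],
        "unrelated_speaker": [0.80, 0.20, 0.30, 0.70, 0.05],
    }
    for name, score in rank_candidates(ai_voice, candidates):
        print(f"{name}: {score:.3f}")
```

In this made-up example, both hypothetical broadcasters score close to 1.0 against the synthetic voice, while the unrelated speaker scores noticeably lower. That mirrors the coffee-bean problem: a score can show that voices sound alike, but it cannot reveal whose recordings a model was trained on.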

And that’s the problem: the burden of proof falls on the victim.

We don’t have reliable forensic methods to determine whose voice went into training an AI. The major AI companies treat their training data as closely guarded trade secrets:

- Google won’t disclose exactly how it created NotebookLM’s voices
- OpenAI won’t reveal what data trained ChatGPT’s voice features
- These “black boxes” remain intentionally opaque

The practical barrier: Even if your voice was cloned, proving it requires:

1. Suspecting it happened in the first place (How would you know?)
2. Accessing the AI system to hear the similarity (What if it’s behind a paywall or regional restriction?)
3. Hiring expensive forensic experts (Most people can’t afford this.)
4. Overcoming the “it could be coincidence” defense

This asymmetry favors the AI companies. They have all the data about what went into their training. You have none. And they’re not volunteering it.

The Celebrity Precedents: From Bette Midler to Scarlett Johansson

Voice appropriation lawsuits aren’t new. What’s new is the AI dimension.

The Bette Midler Case: Midler v. Ford Motor Co. (1988)

In 1985, Ford Motor Company’s advertising agency asked Bette Midler to sing “Do You Want to Dance” for a commercial. She declined, so the agency hired one of her former backup singers to imitate her distinctive style instead. The district court ruled against Midler, finding no legal basis for her claim.

But the Ninth Circuit Court of Appeals reversed, establishing a landmark precedent. The court ruled that when a “distinctive voice of a professional singer is widely known” and is “deliberately imitated in order to sell a product, the sellers have appropriated what is not theirs.”

Critically, the court recognized that California law protects against appropriation of identity—and voice can be a component of identity as recognizable as a face.

This became the foundation for modern voice rights in America. It’s why advertisers can’t simply hire soundalikes for celebrity voices without consequences. It’s why you sometimes see the fine print: “Celebrity voice impersonated.”

Why this matters for Greene’s case: The legal principle from Midler requires proving (1) the voice is distinctive and widely known, and (2) it was deliberately appropriated. Greene’s challenge is demonstrating both elements apply to an AI-generated voice.

The Scarlett Johansson Wake-Up Call (May 2024)

Then came the incident that made headlines worldwide.

OpenAI CEO Sam Altman approached Scarlett Johansson nine months before launching GPT-4o’s voice features. He wanted to license her voice—not coincidentally, Johansson had voiced the AI companion in the 2013 film Her. She declined.

Two days before the product announcement, Altman contacted Johansson’s agent to ask her to reconsider. Before they could connect, OpenAI unveiled GPT-4o, complete with a voice called “Sky” that sounded strikingly like Johansson.

After the announcement, Altman posted just one word on X: “Her.”

Johansson was not amused.

“I was shocked, angered and in disbelief that Mr. Altman would pursue a voice that sounded so eerily similar to mine that my closest friends and news outlets could not tell the difference,” she said in a statement.

OpenAI claimed Sky was based on a different professional actress and paused its use “out of respect for Ms. Johansson.” No lawsuit was filed. The voice disappeared. But the message was clear: AI companies were willing to push boundaries on voice appropriation, even when explicitly told “no.”

Why David Greene’s Case Is Different

Greene isn’t Bette Midler or Scarlett Johansson. He’s famous—but famous in a specific way. He’s a voice that millions recognized every morning without necessarily knowing his name or face.

This creates a fascinating legal question, as Cornell Law Professor James Grimmelmann notes: Courts must determine not just whether the voice sounds similar, but whether Greene is “famous enough for ordinary people to recognize it.”

There’s also the matter of harm. Midler lost a commercial opportunity. Johansson saw her likeness attached to a product she explicitly refused. Greene’s situation is more nuanced.

He’s concerned about his voice saying things he never would. “I read an article in the Guardian about how this podcast tool can be used to spread conspiracy theories and lend credibility to the nastier stuff in our society,” Greene told reporters. “For something that sounds like me to be used in service of that was really troubling.”

His former NPR colleague Mike Pesca put it more bluntly: “They used it to make the podcasting equivalent of AI ‘slop’… They have banter, but it’s very surface-level, un-insightful banter, and they’re always saying, ‘Yeah, that’s so interesting.’ It’s really bad.”

For someone who built a career on the art of thoughtful conversation, that association stings.

California’s New Voice Protection Laws

California, often the first mover on privacy issues, enacted two significant AI voice and likeness laws that took effect January 1, 2025.

AB 2602: Protecting Living Performers’ Digital Replicas

Assembly Bill 2602, signed by Governor Newsom in September 2024, prevents unauthorized use of digital replicas in place of live performances. Key provisions:

Contract Requirements: If you’re signing a contract that involves creating or using your digital replica, it must include:

- A “reasonably specific description” of the intended use
- Either representation by legal counsel, with the commercial terms stated clearly in a contract you’ve signed, OR
- Protection through a labor union collective bargaining agreement that addresses digital replicas

Who It Protects: The law specifically targets performers—actors, voice artists, musicians—whose livelihoods depend on their voices and likenesses.

Why It Matters: Prevents studios from hiring you once, scanning your voice and likeness, then using your AI clone indefinitely without additional compensation or approval.

AB 1836: Protecting Deceased Persons’ Digital Replicas

Assembly Bill 1836 extends protection beyond the grave, granting estates control over a deceased person’s digital replica for 70 years after death.

Key Provisions:

- Companies cannot use a digital replica of a deceased person’s voice or likeness without estate authorization
- Minimum statutory damages: the greater of actual damages or $10,000 per violation
- Exemptions exist for news, documentary, satire, and parody
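The damages rule works as a floor, not a cap. Here is a minimal Python sketch of that arithmetic under a simplified reading of the rule as summarized above: it assumes each violation is assessed separately with its own actual-damages figure, and it ignores anything else a court might award. The function name is hypothetical.

```python
# Simplified sketch of the damages floor as summarized in this article:
# for each violation, the award is the greater of actual damages or $10,000.
STATUTORY_MINIMUM = 10_000  # dollars per violation


def minimum_award(actual_damages_per_violation: list[float]) -> float:
    """Sum the per-violation awards, applying the $10,000 floor to each one."""
    total = 0.0
    for actual in actual_damages_per_violation:
        if actual < 0:
            raise ValueError("actual damages cannot be negative")
        total += max(actual, STATUTORY_MINIMUM)
    return total


if __name__ == "__main__":
    # Two hypothetical violations: one with $2,500 in provable harm, one with $40,000.
    print(minimum_award([2_500, 40_000]))
```

For those two hypothetical violations, the floor lifts the first award from $2,500 to $10,000, so the total is $50,000 rather than $42,500.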

Real-World Impact: Want to use James Dean in a CGI film or recreate Marilyn Monroe’s voice for a commercial? You need estate permission—and likely a licensing fee.

The Consumer Gap: What About the Rest of Us?

Here’s the problem: These laws primarily protect performers and celebrities. What about ordinary people?

Consider these everyday scenarios where your voice is captured:

- Voice assistants: Every “Hey Siri,” “OK Google,” or “Alexa” command
- Customer service calls: “This call may be recorded for quality assurance”
- Video calls: Zoom, Teams, Google Meet recordings
- Voice messages: Texts, voicemails, social media voice notes
- Smart home devices: Doorbell cameras, home security systems
- Car systems: Built-in voice navigation and calling

Tech companies have collected billions of hours of human speech to train AI models. Buried in the terms of service you quickly scrolled past: language allowing companies to use your interactions to “improve products and services.”

The uncomfortable truth: You’ve likely already “consented” to your voice being used for AI training—even though you had no idea that’s what you were agreeing to.

Why Greene can sue but you probably can’t:

- Greene is famous enough that people recognize the voice specifically as his
- Under Midler v. Ford, voice rights require proving distinctiveness and recognition
- If your voice was mixed into training data with millions of others, proving it was used is nearly impossible
- Even if you could prove it, you may have contractually waived your rights in some forgotten TOS agreement

This is the regulatory gap: California’s new laws protect professional performers, but offer little recourse for the average person whose voice ends up in an AI training dataset.

The “Paid Actor” Defense and What It Really Means

Google’s response to Greene’s lawsuit is straightforward: “The sound of the male voice in NotebookLM’s Audio Overviews is based on a paid professional actor Google hired.”

This defense, if true, would be entirely legitimate. Companies have every right to hire voice actors and use their voices in AI systems—provided they do so with proper contracts and compensation.

But it raises important follow-up questions:

Who is this actor? Google hasn’t identified them publicly (likely due to privacy concerns and to avoid harassment).

What did they agree to? Did their contract fully disclose that their voice would be used to create an AI that could generate unlimited new content? Were they compensated appropriately for that scope of use?

Are they comfortable with the outcome? If people consistently mistake the AI voice for David Greene’s, that might concern the actual voice actor whose work is being misidentified.

The voice acting industry context:

The voice acting profession has been significantly disrupted by AI. Many actors signed contracts years ago—before AI voice synthesis was viable—that granted broad rights to their recordings. Some have since discovered their voices being used in AI applications without additional compensation or approval beyond their original session fee.

This has led to industry-wide concerns about whether traditional voice work contracts adequately address AI use, and whether actors fully understood what they were agreeing to before the technology existed.

California’s AB 2602 was designed specifically to address this issue going forward, requiring contracts to spell out digital replica uses explicitly.

The “archetypal voice” question:

Adam Eisgrau, AI Copyright Policy Director at the Chamber of Progress, outlined the core legal question: “If a California jury finds that the voice of NotebookLM is fully Mr. Greene’s, he may win. If they find that it’s got attributes he also possesses, but is fundamentally an archetypal anchorperson’s tone and delivery it learned from a large dataset, he may not.”

In other words: Is there such a thing as a “generic warm male podcaster voice”?

Think about it: Professional broadcasters are often trained to speak in similar ways. Clear enunciation. Moderate pace. Warm but authoritative tone. Minimal regional accent. These are industry standards, not individual trademarks.

If an AI learns these general qualities from training data, is that different from a voice acting student learning them in class?

The legitimate AI use case:

It’s worth acknowledging: Voice AI has valuable, non-exploitative applications:

- Accessibility tools that give voice to people who’ve lost their speech
- Language learning apps that provide natural conversation practice
- Audiobook narration that makes more content accessible
- Customer service systems that can handle routine queries 24/7

The technology itself isn’t the villain. The question is whether it’s being developed and deployed ethically, with proper consent and compensation for the humans whose voices make it possible.

What This Means for You: Why Every Voice Assistant User Should Pay Attention

David Greene has the resources and recognition to fight Google in court. Most of us don’t. But his case illuminates risks we all face in our daily lives:

1. Your Voice Is Already Captured—More Than You Realize

Every interaction with voice-enabled technology creates data that companies store and analyze:

Daily voice capture:

- Those “Hey Siri” wake-word samples
- Google Assistant queries (“What’s the weather?”)
- Alexa commands (“Play music”)
- Dictated text messages
- Voice-to-text searches
- Customer service calls
- Smart doorbell conversations
- Car navigation voice commands

Where it goes:

- Stored on company servers (often indefinitely unless you manually delete)
- Used to “improve services” (which may include AI training)
- Sometimes reviewed by human contractors for quality assurance
- Potentially shared with third-party partners under broad data-sharing agreements

The scale: Amazon alone processes billions of Alexa voice requests per year. Google Assistant handles similar volumes. That’s an enormous reservoir of human voice data, most of it collected from ordinary people who never considered how it might be used.

2. “Consent” Was Never Meaningful

Remember clicking “I Agree” on those terms of service?

What you probably thought you agreed to: “They’ll store my voice commands to make the service work better.”

What you may have actually agreed to: Language like this (from Google’s own published policies):

- “We use information we collect… to provide, maintain, protect and improve our services, to develop new ones…”
- “We may use automated systems to analyze your content…”

The bait-and-switch: These terms were written to be legally broad enough to cover future uses—including AI training—that consumers couldn’t have anticipated when they agreed.

Courts are beginning to question whether this constitutes meaningful informed consent. But for now, you’ve likely already signed away rights you didn’t know you were giving up.

3. Detection Is Nearly Impossible Without Resources

Even with David Greene’s resources, expert analysis could only reach 53-60% confidence. For the average person:

The detection problem:

- You probably wouldn’t even know your voice was cloned (Who systematically listens to new AI voices to check?)
- If you suspected it, you’d need expensive forensic analysis
- Even forensics may not provide conclusive proof
- The burden of proof is entirely on you, not the company

The asymmetry:

- AI companies know exactly what training data they used
- They have no legal obligation to tell you
- They can claim trade secret protection to avoid disclosure
- You have no way to audit their training datasets

4. The Law Is Playing Catch-Up—And May Never Catch Up

What’s protected now:

- Professional performers in California (AB 2602, AB 1836)
- Celebrity voices under common law right of publicity
- Deceased persons’ voices (in California, for 70 years)

What’s NOT clearly protected:

- Ordinary people’s voices used in training data
- “Inspired by” or “in the style of” voices that aren’t direct copies
- Voices captured with broad TOS consent
- Aggregate use of many voices together

The regulatory vacuum:

- Federal legislation has been proposed but not enacted
- Most states have no AI-specific voice protection laws
- International coordination is minimal
- Technology is advancing faster than legal frameworks

Reality check: By the time laws catch up, AI voice technology may be so ubiquitous that retroactive protection is meaningless. The voices will already be in the models.

Protecting Your Voice in the Age of AI: Practical Steps You Can Take Today

You can’t completely prevent voice capture in the modern world. But you can be more deliberate about controlling your voice data:

1. Audit Your Voice Assistant Settings (Do This Now)

Google Assistant:

- Visit myactivity.google.com/myactivity
- Click “Voice & Audio activity”
- Delete past recordings and turn off future storage if desired
- Settings → Google Assistant → disable “Voice Match” if you don’t need hands-free activation

Amazon Alexa:

- Alexa app → Settings → Alexa Privacy → Review Voice History
- Enable “Don’t save recordings” or set auto-delete to 3 months
- Turn off “Help Improve Amazon Services” to opt out of human review

Apple Siri:

- Settings → Siri & Search → disable “Listen for ‘Hey Siri’”
- Settings → Privacy & Security → Analytics & Improvements → disable “Improve Siri & Dictation”
- Settings → Siri & Search → Siri & Dictation History → Delete Siri & Dictation History

2. Read the Fine Print (Especially for These)

Before participating in voice-enabled services, look for these red flags in privacy policies:

Warning phrases:

- “We may use your data to train machine learning models”
- “We use your interactions to improve our services and develop new features”
- “We may share anonymized voice data with third parties”

High-risk situations:

- Voice-enabled surveys or market research
- New AI apps requesting microphone access
- “Free” transcription or voice-to-text services
- Beta testing programs for voice features

Questions to ask:

- Will my voice recordings be used for AI training?
- Can I opt out of AI training while still using the service?
- How long are recordings stored?
- Will you notify me if this policy changes?
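If you want a quick first pass before reading a long privacy policy line by line, a few regular expressions can flag wording similar to the warning phrases listed above. This is a minimal Python sketch: the patterns and labels are hypothetical, modeled on the phrases in this section, and they will miss policies that express the same ideas differently. A match means “read this part closely,” not proof of wrongdoing, and no match does not mean your voice is safe.

```python
import re

# Hypothetical red-flag patterns modeled on the warning phrases above.
# Illustrative only: real policies phrase these ideas in many other ways.
RED_FLAGS = {
    "AI/model training": r"\btrain\w*\s+(?:\w+\s+){0,3}(?:models?|algorithms?|artificial intelligence|AI)\b",
    "vague 'improve our services' language": r"\bimprove\s+(?:\w+\s+){0,2}(?:services|products)\b",
    "new features or products": r"\bdevelop\s+new\s+(?:features|products|services)\b",
    "sharing with third parties": r"\bshar\w*\s+(?:\w+\s+){0,4}with\s+third[-\s]part(?:y|ies)\b",
    "voice or audio data": r"\b(?:voice|audio)\s+(?:data|recordings?|samples?)\b",
}


def scan_policy(text: str) -> dict[str, list[str]]:
    """Return each red-flag label that matches, with the matching snippets."""
    findings: dict[str, list[str]] = {}
    for label, pattern in RED_FLAGS.items():
        snippets = [m.group(0) for m in re.finditer(pattern, text, flags=re.IGNORECASE)]
        if snippets:
            findings[label] = snippets
    return findings


if __name__ == "__main__":
    sample = (
        "We use your interactions to improve our services and develop new features. "
        "We may share anonymized voice data with third parties."
    )
    for label, snippets in scan_policy(sample).items():
        print(f"{label}: {snippets}")
```

For every flagged snippet, go back and read the surrounding paragraph in full; the patterns deliberately cast a wide net.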

3. Minimize Your Voice Data Footprint

Simple actions:

- Use push-to-talk instead of “always listening” mode
- Disable voice features you don’t actually use
- Type instead of dictate when practical
- Use wired headphones for private calls (many wireless earbuds send data to manufacturers)
- Decline voice-based customer service when you have the option to chat or email

For video calls:

- Ask before recording; if someone else is recording, your voice enters their data ecosystem
- Use end-to-end encrypted platforms when discussing sensitive topics (Signal, FaceTime)
- Be cautious about Zoom AI features like automatic transcription or meeting summaries

4. Document Your Voice (If You Use It Professionally)

If you’re a podcaster, voice actor, content creator, or public speaker:

- Maintain dated recordings of your natural speaking voice
- Document your vocal characteristics (pitch range, speaking pace, signature phrases)
- Register copyrighted works that feature your voice
- Keep these records as evidence in case you ever need to prove voice misappropriation

5. Support Stronger Privacy Laws

The Greene case may influence pending federal legislation. Consumer advocacy matters:

- Contact your representatives about AI voice protection laws
- Support organizations advocating for digital rights (EFF, Consumer Reports, EPIC)
- Comment on proposed regulations when public comment periods open
- Share information about voice privacy with your networks

Pending federal legislation to watch:

- NO FAKES Act (Nurture Originals, Foster Art, and Keep Entertainment Safe)
- Various AI transparency and consent bills in committee

6. Know Your Rights (State-by-State)

If you live in California:

- You have rights under CCPA to request what voice data companies have collected about you
- You can demand deletion of your data
- If you’re a performer, AB 2602 gives you contract protections

If you live elsewhere:

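- Check whether your state has a biometric privacy law (Illinois, Texas, and Washington have some of the strongest)
- Voiceprints are often classified as “biometric identifiers” under these laws
- Illinois’s Biometric Information Privacy Act (BIPA) lets individuals sue over unauthorized biometric data collection; in some other states, enforcement rests with the state attorney general instead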

7. Assume Recording in Professional Contexts

Be especially cautious when:

- Participating in customer service calls (“this call may be monitored…”)
- Speaking at recorded events or webinars
- Being interviewed for podcasts or video content
- Using work-provided devices or platforms (your employer likely owns the data)
- Participating in beta tests for AI features

Ask explicitly:

- “Will this recording be used for AI training?”
- “Can I opt out of AI training while still participating?”
- “Who has access to this voice data?”

The uncomfortable reality: Once your voice enters a dataset, it’s nearly impossible to remove. Prevention is your only practical protection.

The Bigger Picture: This Is About More Than One Lawsuit

David Greene is quick to point out that he isn’t against AI. “I’m not some crazy anti-AI activist,” he told reporters. “It’s just been a very weird experience.”

He’s not asking for innovation to stop. He’s asking for something that should be simple: “Google should have asked permission.”

That’s the heart of this case—and the heart of the privacy challenge AI presents.

The race to train AI has created an “ask forgiveness, not permission” culture:

- Companies harvest human data at massive scale
- Training datasets are kept secret
- Consent is buried in incomprehensible terms of service
- Compensation is rarely offered to the people whose data powers the AI
- By the time anyone objects, the models are already trained and deployed

This pattern extends beyond voices. It includes writing, art, photography, code, personal communications—the sum total of human creative and communicative output, absorbed into systems that can now generate convincing imitations.

The technology is remarkable. The ethics are murky. The laws are scrambling to keep up.

What’s Really at Stake

Whether Greene wins or loses, his lawsuit forces a conversation we desperately need to have:

In a world where AI can clone your voice, your face, your writing style—what rights do you have to your own identity?

Your voice is not just sound. It’s you:

- Biometric data as unique as your fingerprint, carrying patterns developed over a lifetime
- How your children recognize you on the phone before you say your name
- How your partner knows your mood from your tone
- How the world experiences who you are

Should tech companies be able to capture it, synthesize it, and profit from it—without even asking? Without compensating you? Without giving you any control over how it’s used?

David Greene says no. The courts will decide his case. But the question it raises belongs to all of us.

Why This Lawsuit Matters for Every Consumer

Even if you’re not David Greene, this case could set precedents that affect your rights:

If Greene wins:

- Courts may establish clearer standards for what constitutes voice theft
- AI companies may be required to be more transparent about training data
- Discovery could reveal how major tech companies actually build their voice AI
- Other individuals may have legal grounds to challenge voice cloning

If Greene loses:

- It may signal that AI voice synthesis is too different from traditional voice impersonation for existing laws to cover
- The burden of proof may be too high for most people to ever successfully challenge voice cloning
- It could embolden AI companies to be even less cautious about whose voices they use

Either way:

- The case will generate public awareness about voice data privacy
- It may spur stronger legislation at the state and federal level
- It puts pressure on AI companies to develop more ethical practices
- It reminds us all that we have a voice (pun intended) in how this technology develops

You’re Not Powerless

Yes, the technology is already here. Yes, your voice data is probably already out there. Yes, the legal protections are inadequate.

But consumer awareness and action still matter:

The Scarlett Johansson incident showed that public pressure works—OpenAI pulled the “Sky” voice within days. Companies care about their reputation. Legislators respond to constituent concerns. The future of voice AI isn’t written yet.

What you can do:

- Take the practical steps outlined in this article to minimize your voice data footprint
- Support stronger privacy legislation
- Demand transparency from companies about AI training data
- Make informed choices about which voice-enabled services you use
- Share information with others who may not realize these risks

Technology doesn’t develop on its own. It reflects the choices companies make and the standards we demand.

David Greene is standing up for the principle that your voice is yours. Whether you’re a famous broadcaster or someone who just wants to use Alexa without feeding an AI training dataset, that principle matters.

The question isn’t whether AI voice technology will exist—it already does. The question is whether it will develop with respect for human rights and dignity, or whether those will be treated as obstacles to innovation.

That’s a question worth raising your voice about.

What Comes Next

The Greene v. Google case is in early stages. Discovery—where Google might be compelled to reveal how NotebookLM’s voices were actually created—could prove decisive. If the case proceeds to trial, it could set precedent for how courts evaluate AI voice similarity claims.

Meanwhile, watch for:

- Federal legislation: Multiple bills addressing AI and creative rights are pending in Congress
- Industry self-regulation: After the Johansson incident, some AI companies published voice ethics guidelines
- More lawsuits: Greene likely won’t be the last person to recognize themselves in an AI voice

The age of synthetic media is here. The question is whether we’ll shape it deliberately—or let it shape us.

Have you encountered AI voices that sounded familiar? Concerned about your voice data? Share your thoughts at privacy@myprivacy.blog.