A 5-5 tie vote on Maine’s Legislative Council prevents legislation criminalizing AI-generated child exploitation from even reaching public debate, exposing a dangerous legal loophole that leaves children vulnerable


Executive Summary

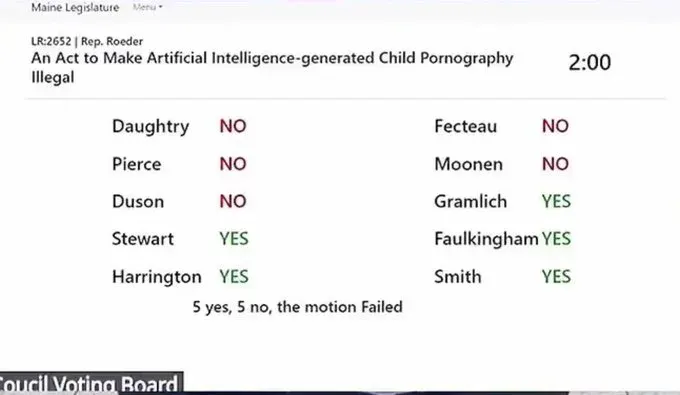

On October 23, 2025, Maine’s Legislative Council deadlocked 5-5 on whether to advance “An Act to Make Artificial Intelligence-Generated Child Pornography Illegal” (LR-2652). The tie effectively killed the proposed legislation before it could reach committee consideration. The vote, which split along largely partisan lines, prevented the bill from receiving public hearings or debate during the upcoming legislative session. The decision has sparked fierce controversy. Republicans accuse Democrats of prioritizing procedure over child protection, while Democrats maintain they rejected a duplicative bill in favor of existing legislative efforts. Meanwhile, Maine law enforcement reports that it cannot investigate known cases of AI-generated child sexual abuse material. This makes Maine an outlier among U.S. states as deepfake technology becomes increasingly sophisticated and accessible.

The Vote That Shocked Maine

The Legislative Council Decision

The Maine Legislative Council—composed of ten legislative leaders from both parties—holds significant power over which bills advance to committee consideration during the state’s second legislative session. Under Maine’s constitutional structure, the second session is limited to budgetary matters, the governor’s legislation, emergency legislation approved by the Legislative Council, legislation from authorized studies, and direct initiative petitions.

On October 23, 2025, the Council voted on whether to admit LR-2652, sponsored by Democratic Representative Amy Roeder of Bangor, for consideration. The vote split 5-5, with all four Republican members and one Democrat, Representative Lori Gramlich of Old Orchard Beach, voting to advance the bill. The remaining five Democrats voted against admitting it: House Speaker Ryan Fecteau of Biddeford, Senate President Mattie Daughtry, House Majority Leader Maureen Terry Pierce, Senate Majority Leader Eloise Vitelli Duson, and Assistant House Majority Leader Kristen Cloutier Moonen.

The 5-5 Legislative Council vote that kept Maine’s AI child pornography bill from reaching committee consideration

Under Legislative Council rules, a tie vote means the motion fails. The bill would not advance to committee, would not receive public hearings, and would not be debated by the full legislature.

Republican Outrage and Disbelief

Republican House Minority Leader Billy Bob Faulkingham expressed shock at the outcome in a video that quickly went viral on social media.

“Well, that was interesting. Just got out of leg council today where I’m in Augusta. We were voting on bills — but the one that really blew my mind was a bill sponsored by a Democrat that would prohibit child pornography created by AI,” Faulkingham stated. “And of course, even though a Democrat sponsored that bill, I voted yes for it because I think child pornography is absolutely disgusting no matter how it’s created.”

“Unfortunately, that bill failed to get entered into the second session because the majority of Democrats voted against letting that bill in, even though it was sponsored by a Democrat. I don’t understand the reasoning why you would not want to go against child pornography, but that actually happened today, so it doesn’t really leave me with a lot of optimism for the second session.”

Senate Minority Leader Trey Stewart issued a scathing statement criticizing the Democratic majority: “It’s pretty insane to me that they’d prioritize naming bridges and roads over protecting kids from having their lives ripped apart by pedophiles with AI… but that seems to be the common theme among the entire Democratic Party right now.”

Representative Katrina Smith, a Republican from Palermo who also sits on the Legislative Council, echoed the frustration: “I was just shocked. I was surprised, I mean, I think it’s something that is very valid. I think it is an emergency based on that. It’s protecting children.”

The Democratic Defense: “It’s Not What You Think”

Claims of Legislative Duplication

Democrats who voted against admitting the bill maintain their decision was procedural rather than substantive, arguing that an existing bill already addresses the issue and that introducing a second bill would create unnecessary duplication and confusion.

Representative Amy Kuhn, Democratic House chair of the Judiciary Committee and a key figure in Maine’s AI and privacy legislation efforts, explained: “Leadership members who recalled that the Judiciary Committee is already working on this declined to admit a duplicative title. Members who did not recall the judiciary is already working on this, voted it in, and then took it to social media.”

The sponsor of the rejected bill, Representative Amy Roeder herself, took to social media to defend the Democratic vote: “I’m guessing someone wanted to stir up ire and outrage that Democrats voted against something like this, but oh…the truth is legitimately SO BORING. There’s already a bill in the legislature that is much more substantive and is being worked by the very capable Judiciary Committee.”

Roeder added: “As soon as the bill title list came out, she and I conferred on it and agreed that it made sense for the Judiciary Committee to continue the work that they had already started. Instead of bringing up a new bill.”

Representative Kuhn characterized Republican criticism as politically motivated: “This is a distraction from the fact that Republican leadership voted against admitting numerous titles that would help Maine people.”

The Bill Democrats Reference: LD 1944

The existing legislation Democrats cite is LD 1944, “An Act to Protect Individuals from the Threatened Unauthorized Dissemination of Certain Private Images, Including Artificially-generated Private Images,” originally proposed by Representative Kuhn during the previous legislative session.

When initially introduced, LD 1944 contained two distinct components:

1. Child pornography provisions: Updates to Maine’s child pornography laws to include AI-generated images (proposed and drafted by the Maine Prosecutors’ Association)
2. Revenge porn provisions: Incorporation of nonconsensual AI-generated images into Maine’s current revenge porn statute

However, this is where the Democratic defense begins to crumble under scrutiny.

The Inconvenient Truth: LD 1944 Doesn’t Actually Protect Children

What Really Happened to LD 1944

While Democrats claim that existing legislation already addresses AI-generated child sexual abuse material, investigative reporting reveals a more troubling reality: the child protection provisions were stripped from LD 1944 before it became law.

According to testimony and reporting from The Maine Monitor, lawmakers on the Judiciary Committee raised concerns about how the proposed child pornography language could create constitutional issues. Representative Kuhn agreed to drop that portion of the bill entirely.

The version of LD 1944 that Governor Janet Mills signed into law in summer 2025 only addressed the revenge porn component—expanding the state’s pre-existing law to include dissemination of altered or “morphed images” as a form of harassment for adults. Critically, it did not label morphed images of children as child sexual abuse material.

This means that, contrary to Democratic claims, Maine currently has no enacted law, and no pending bill, that criminalizes the creation or possession of AI-generated child sexual abuse material.

Law Enforcement Confirms: We Cannot Investigate These Cases

Maine State Police Lieutenant Jason Richards, who oversees the Computer Crimes Unit and commands the Northern New England Internet Crimes Against Children Task Force, testified about the real-world consequences of this legal gap.

In his testimony supporting the original LD 1944, Richards described a disturbing scenario: “Imagine someone taking pictures of your thirteen-year-old child at a soccer game or cheerleading event only to use AI technology to create sexually explicit images or videos of them.”

This wasn’t a hypothetical. Richards confirmed that a Maine man went to a children’s soccer game, photographed kids playing, then went home and used artificial intelligence to transform those innocent photos into sexually explicit images. “Police know who he is,” Richards stated. “But there is nothing they could do because the images are legal to have under state law.”

Richards testified: “I field calls regularly from concerned prosecutors, police officers and parents from around our state posing two scenarios ever present with this material. Either a person has altered an image of their child to create an obscene image or video that is now out in the world to be forever traded and sold or the created image is being used to extort the child in some manner. In either case, I have to tell them there is nothing we can do as these scenarios are not a violation of Maine law.”

The scale of the problem is growing rapidly. In 2020, Richards’ team received 700 tips relating to child sexual abuse materials and reports of adults sexually exploiting minors online in Maine. For 2025, Richards expects more than 3,000 tips, a more than fourfold increase. However, his team can investigate only about 14% of tips in any given year. Under current law, they must discard any material that involves AI.

“It’s not what could happen, it is happening, and this is not material that anyone is OK with in that it should be criminalized,” said Shira Burns, executive director of the Maine Prosecutors’ Association.

This legal loophole exists even as criminal networks actively exploit children online, using sophisticated techniques to create and distribute exploitative material.

The National Context: Maine as an Outlier

The Deepfake Explosion

The rapid advancement of generative AI and deepfake technology has created unprecedented challenges for child protection. As Lieutenant Richards noted, two years ago it was easy to discern when material had been produced by AI. Today, it’s nearly impossible without extensive forensic experience.

AI can take a fully clothed picture of a child and make the child appear naked in a “deepfake” image. People also train AI models on child sexual abuse materials that are already online, creating entirely synthetic but realistic content.

The South Korea Crisis: A Warning to the World

The scale of this problem became horrifyingly clear in South Korea in 2024, where more than 500 schools and universities were targeted in a coordinated wave of deepfake sexual abuse. AI-generated sexualized images of students—mostly girls—were circulated in encrypted Telegram groups, with perpetrators often being classmates of the victims.

The crisis wasn’t happening on the dark web; it was occurring on mainstream platforms used by millions. More than 80% of those arrested were teenagers. Their teachers described many of them as “normal boys” who had never shown signs of violent behavior.

South Korean data revealed alarming statistics:

- Nearly 60% of deepfake sex crime victims were minors
- 297 deepfake sexual exploitation crimes were reported between January and July 2024 alone
- One Telegram chatroom had over 220,000 members
- Over 230 middle schools, high schools, and universities were identified on a list of “Schools of Deepfake Telegram Victims”

The abuse was gamified, with users earning rewards for inviting friends, sharing images, and escalating harm. Victims often had to continue attending school with perpetrators in the same classrooms, causing immense psychological trauma.

In response, South Korea dramatically increased criminal penalties, raising the maximum prison term from five to seven years for creating and distributing sexually explicit deepfake materials. The country also criminalized possession, purchase, storage, and even viewing of deepfake pornography. In October 2024, a South Korean court handed down a ten-year prison sentence to a perpetrator who created and distributed 1,852 deepfake photos and videos.

This crisis demonstrates what can happen when legal frameworks fail to keep pace with technology. Maine currently faces the same vulnerability that South Korea experienced before its legal reforms.

At the end of 2024, the National Center for Missing and Exploited Children reported a 1,325% increase in the number of tips it received related to AI-generated child sexual abuse materials compared to the previous year. Investigators are also increasingly finding this material in cases involving possession of child sexual abuse materials.

South Korea’s experience, described above, shows how quickly this can escalate. Its 2024 scandal, driven primarily by teenage perpetrators, prompted emergency legislation and nationwide protests.

In September 2025, a former Maine state probation officer pleaded guilty in federal court to accessing child sexual abuse materials. According to court documents, when federal investigators searched the man’s Kik account, they found he had sought out the content and possessed at least one image that was “AI-generated.”

How Other States Are Responding

While Maine debates procedural questions, other states have taken decisive action. According to MultiState, a government relations firm tracking AI legislation:

- 43 states have created laws outlawing sexual deepfakes
- 28 states have banned the creation of AI-generated child sexual abuse material
- 22 states have done both

States that have enacted AI-related laws, some of them addressing child exploitation directly, include:

California: Multiple laws addressing AI transparency, bias, and harmful content, with specific provisions for child protection

Colorado: The comprehensive Colorado AI Act (SB 24-205) addresses high-risk AI systems with duties of reasonable care and impact assessments

Iowa: HF 2240 criminalizes the creation of sexually explicit images of children using AI

South Dakota: S 79 similarly bans AI-generated child sexual abuse material

Texas: Comprehensive AI regulations including provisions for high-risk AI systems

Maine’s failure to enact similar protections places it among a shrinking minority of states that have not updated their laws to address this rapidly evolving threat.

International Approaches

The debate in Maine contrasts sharply with aggressive action abroad. European countries have been grappling with similar issues, though not without controversy.

The EU’s proposed Chat Control regulation, which would have mandated mass scanning for child sexual abuse material, was defeated in September 2025 after privacy advocates argued it would create dangerous surveillance infrastructure. However, that debate centered on the method of detection—mass surveillance versus targeted investigation—not whether the content itself should be criminalized.

Even privacy advocates who opposed Chat Control acknowledged that AI-generated child sexual abuse material should be illegal. The question was how to detect it without creating a surveillance state, not whether creating it should be a crime.

China has implemented strict regulations on deepfake technologies and synthetic media, including specific provisions addressing harmful AI-generated content.

Academic and Expert Consensus

Riana Pfefferkorn, a policy fellow at the Stanford Institute for Human-Centered AI who studies abusive uses of AI and the intersection of legislation and the Constitution, said Maine’s position is unusual.

“It’s a bipartisan area of interest to protect children online, and nobody wants to be the person sticking their hand up and very publicly saying, ‘I oppose this bill that would essentially better protect children online,’” Pfefferkorn noted.

She pointed out that morphed images of children are already banned under federal law, and there is established case law that Maine could reference in drafting its own legislation. “Most legislatures that have considered changing pre-existing laws on child sexual abuse material have at least added that morphed images of children should be considered sexually explicit material,” she said.

While federal agencies have a role in investigating these cases, they typically handle only the most serious ones. It falls primarily on states to police sexually explicit materials within their jurisdictions.

The Constitutional Question: Real or Convenient?

Why Were Child Protection Provisions Dropped?

Democrats claim the child pornography provisions were removed from LD 1944 due to constitutional concerns, but this explanation raises more questions than it answers.

The First Amendment protects speech, including some forms of offensive or distasteful content. However, child pornography has long been recognized as a category of speech that receives no First Amendment protection. In New York v. Ferber (1982), the Supreme Court held that child pornography could be banned even if it did not meet the legal definition of obscenity because of the compelling government interest in protecting children from sexual exploitation.

The constitutional question with AI-generated child sexual abuse material centers on whether it can be banned when no actual child was directly harmed in its creation. The Supreme Court addressed this in Ashcroft v. Free Speech Coalition (2002), striking down provisions of the Child Pornography Prevention Act that banned “virtual child pornography” because the law was written too broadly.

However, the Supreme Court left room for more narrowly tailored laws. Congress responded with the PROTECT Act of 2003. The Act criminalized obscene visual representations of the sexual abuse of children, including drawings and computer-generated images. It also extended the federal definition of child pornography to computer-generated images that are “indistinguishable from” images of real minors. The Supreme Court upheld the Act’s pandering provision in United States v. Williams (2008).

More than two dozen states have successfully enacted laws banning AI-generated child sexual abuse material, using language that draws on federal precedent and case law. These laws focus on:

1. Images that are indistinguishable from real children
2. Images created using photos or likenesses of real, identifiable children
3. Obscene visual representations regardless of creation method

Was the Constitutional Concern Legitimate?

The Judiciary Committee’s constitutional concerns may have had some basis if the original draft was poorly worded. However, the usual solution to constitutional concerns is to amend the problematic language, not to abandon child protection entirely.

Representative Kuhn has indicated she plans to reintroduce the child protection provisions “mostly unchanged from her early version” when the Legislature reconvenes in January 2026. If the language was constitutionally problematic before, how would reintroducing it largely unchanged address those concerns?

This inconsistency suggests that either:

1. The constitutional concerns were overstated or could have been addressed through amendments
2. The decision to drop the provisions was influenced by other factors beyond constitutional law
3. Political considerations or time constraints played a larger role than legal analysis

Multiple states with similar constitutional frameworks have successfully enacted these protections, demonstrating that constitutional obstacles can be overcome with proper drafting.

The Procedural Defense Falls Apart

Why “Avoiding Duplication” Doesn’t Hold Water

Democrats argue they rejected Representative Roeder’s new bill to avoid duplication with existing Judiciary Committee work. However, this defense collapses under scrutiny:

Problem 1: There Is No Existing Bill. The version of LD 1944 that became law does not contain child protection provisions. Democrats cannot claim they’re avoiding duplication with a bill that doesn’t address the issue.

Problem 2: The Work Was Already Abandoned. The Judiciary Committee dropped the child protection provisions from LD 1944 during the previous session. If the committee believed this issue should be addressed, why did it remove those provisions rather than refine the language?

Problem 3: Reintroduction Plans Acknowledge the Gap. Representative Kuhn has publicly stated she plans to reintroduce child protection provisions in January 2026. This admission proves there is currently no pending legislation that addresses the issue. Otherwise, why would she need to reintroduce it?

Problem 4: Duplicative Bills Are Common. Maine’s legislative process regularly handles multiple bills on similar topics. Committees routinely consolidate similar legislation or work on parallel bills that address different aspects of complex issues. If Democrats believed Roeder’s bill was duplicative but well-intentioned, the appropriate response would have been to admit it and let the Judiciary Committee consolidate it with its own work.

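Problem 5: Emergency Designation Was Warranted. Apart from budgetary matters, the governor’s bills, legislation from authorized studies, and citizen initiatives, Maine’s second legislative session is limited to emergency legislation. Given that: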

- Law enforcement cannot currently investigate known cases
- The problem is rapidly escalating (1,325% increase in reports)
- More than two dozen other states have already acted
- Real Maine children are being victimized

…a strong argument exists that this legislation meets the “emergency” threshold. The Legislative Council’s role is to determine whether legislation qualifies as emergency legislation, not to judge whether committee work is “duplicative.”

The Political Optics Problem

The Democratic defense suffers from a fundamental political reality: explaining why you voted against a bill to ban AI-generated child pornography requires a much longer, more complex explanation than the Republican attack.

In politics, if you’re explaining, you’re losing. Republicans can say: “Democrats voted against banning AI child porn.” Democrats must respond: “Well, actually, there’s an existing bill that was modified, and the provisions were removed due to constitutional concerns, but we plan to reintroduce them, and this was really about avoiding duplication…”

By the time Democrats finish their explanation, most voters have tuned out. The initial headline and social media posts have already done their damage.

This political miscalculation suggests either:

1. Democrats genuinely didn’t anticipate the public reaction
2. They prioritized internal legislative procedure over public perception
3. There were other unstated reasons for the vote

What the Legislative Council Actually Does

Understanding Maine’s Gatekeeping Process

To understand why this vote matters so much, it’s important to understand the Legislative Council’s role in Maine’s government structure.

Maine operates on a biennial legislative schedule: each Legislature serves a two-year term divided into two regular sessions. The first regular session covers a broad range of topics without formal limits on the subject matter of bills. The second regular session, however, is constitutionally limited to:

- Budgetary matters
- The Governor’s legislation
- Emergency legislation approved by the Legislative Council
- Legislation resulting from authorized studies
- Legislation initiated by direct initiative petition

The Legislative Council serves as the gatekeeper for what qualifies as “emergency legislation” during the second session. The Council consists of ten members:

- The President of the Senate
- The Speaker of the House
- The Democratic and Republican floor leaders from both chambers
- The Democratic and Republican assistant floor leaders from both chambers

Since Democrats currently control both chambers of Maine’s legislature, they hold six of the ten Legislative Council seats, giving them effective control over which bills advance.

The “Emergency” Standard

Maine law does not provide a rigid definition of what constitutes an “emergency” worthy of Legislative Council approval. This flexibility allows the Council to respond to genuine crises but also creates opportunities for partisan gamesmanship.

Reasonable people can disagree about whether any specific bill meets the emergency threshold. However, when law enforcement testifies that they cannot investigate known cases of child exploitation, when the problem is rapidly escalating, and when more than half of U.S. states have already updated their laws, the argument for emergency status becomes compelling.

The Legislative Council approved 84 bills for consideration in the second session. These included various matters that many observers would not consider emergencies in the traditional sense. The selectivity of what qualified as an “emergency” opened Democrats to charges of inconsistency.

The Public Hearing That Never Happened

By voting against admitting Representative Roeder’s bill, the Legislative Council ensured that:

1. No public hearing would be held where parents, law enforcement, prosecutors, and civil liberties advocates could testify
2. No committee work session would allow legislators to refine problematic language
3. No debate would occur on the House or Senate floor
4. No amendments could be proposed to address constitutional concerns
5. No vote would be taken that would put each legislator on record

This complete shutdown of the legislative process means Maine voters cannot know where their individual representatives stand on this issue. The five Democrats who voted “no” on the Legislative Council bear the political responsibility, but other legislators never had the opportunity to vote.

This procedural outcome particularly frustrated Republicans because it prevented them from forcing a floor vote that would have required every Democratic legislator to publicly vote for or against banning AI-generated child pornography.

The Public Backlash and Grassroots Response

The Change.org Petition

A Change.org petition emerged rapidly after the vote, demanding that legislators “revisit and pass legislation that will criminalize AI-generated child pornography and ensure those who create or distribute such content are held accountable.”

The petition specifically references the soccer game incident: “On or around September 14th a Maine man went to a youth soccer game and took photos of the children playing soccer. He later went home and had AI turn them into exploitive images. Commanding Officer of the Computer Crimes Unit, LT. Richards bluntly told us there was nothing law enforcement could do because of the way the current law is written.”

The petition criticizes LD 1944’s limitations: “LD 1944 ‘An Act to Protect Individuals from the Threatened Unauthorized Dissemination of Certain Private Images, Including Artificially-generated Private Images’ is the current Bill that addresses this issue but its language does not hold perpetrators accountable with AI-generated images nor deep fakes, specifically when these images depict children.”

Petitioners note that other states, including California, “have made proper updates and amendments to their legislature ensuring children are protected from this new-age sexual exploitation,” and encourage Maine to follow suit.

Social Media Firestorm

The vote generated significant attention on social media platforms, with Representative Faulkingham’s video explanation going viral. Conservative media outlets and child safety advocates amplified the story nationally, presenting it as an example of Democratic priorities being misaligned with child protection.

Progressive outlets largely remained silent on the issue, unwilling to defend the vote but also reluctant to criticize Democratic leadership in Maine. This silence from typically vocal child safety advocates on the political left suggested discomfort with the Democratic position.

Several prominent child safety organizations that typically avoid partisan politics expressed concern about Maine’s failure to update its laws, though most were careful not to directly criticize the Legislative Council vote.

Constituent Pressure Builds

Reports indicate that Legislative Council members who voted against the bill received significant constituent communications expressing anger and confusion. Several Democratic legislators privately acknowledged that the vote created a public relations problem, even if they believed their procedural reasoning was sound.

The controversy puts Democratic legislators in a difficult position heading into the 2026 election cycle, when all 186 legislative seats will be on the ballot. Republicans have already indicated they will make this vote a campaign issue in competitive districts.

The Broader Context: AI Regulation and Privacy Rights

Balancing Child Protection with Privacy Concerns

The debate over AI-generated child sexual abuse material occurs within a larger context of how democracies should regulate artificial intelligence and balance competing values.

Privacy advocates have legitimate concerns about government overreach and surveillance in the name of child protection. The EU’s Chat Control proposal, which would have required mass scanning of all private communications, was rightly criticized as creating dangerous surveillance infrastructure that could be abused.

However, criminalizing the creation and possession of AI-generated child sexual abuse material is fundamentally different from mandating mass surveillance to detect it. States can ban this content—as they ban child pornography generally—while still requiring law enforcement to obtain warrants and follow proper investigative procedures.

The privacy vs. security debate should focus on how law enforcement detects illegal material, not on whether creating it should be illegal in the first place.

The Slippery Slope Fallacy

Some civil liberties advocates worry that banning AI-generated content could lead to broader restrictions on synthetic media, potentially impacting legitimate uses of AI in entertainment, education, and artistic expression.

This concern, while worth considering, represents a slippery slope fallacy. Society has successfully maintained distinctions between child pornography (banned) and other offensive content (protected) for decades. Adding AI-generated child sexual abuse material to the category of prohibited content doesn’t inherently threaten legitimate uses of AI any more than existing child pornography laws threaten legitimate photography.

Well-crafted legislation can distinguish between:

- AI-generated child sexual abuse material (prohibited)
- AI-generated adult content with proper consent and age verification (potentially regulated)
- AI-generated synthetic media for entertainment, education, or commentary (protected)

More than two dozen states have demonstrated that these distinctions can be codified in constitutional legislation.

Technology Advancing Faster Than Law

Maine’s situation exemplifies a common challenge in technology policy: law typically lags behind technological capability. When child pornography laws were first written, legislators didn’t envision artificial intelligence capable of generating photorealistic synthetic images.

This lag creates a predictable pattern:

1. New technology enables new forms of harm
2. Existing laws don’t clearly cover the new technology
3. Criminals exploit the legal uncertainty
4. Victims suffer while legislators debate
5. Eventually, laws are updated

The question is how quickly this cycle completes. States that have already updated their laws moved through this cycle rapidly. Maine remains stuck in the debate phase while real children are being victimized.

The Federal-State Dynamic

While morphed images of children are already banned under federal law, federal agencies cannot investigate every case. The FBI and Department of Homeland Security focus on major producers and distributors, leaving most cases to state and local law enforcement.

When state law doesn’t criminalize AI-generated child sexual abuse material, local police and prosecutors cannot act even when they identify offenders. This creates a dangerous enforcement gap.

Lieutenant Richards’ testimony made this clear: he knows of cases but cannot investigate them under current Maine law. These aren’t hypothetical scenarios—they’re real situations happening right now.

The Political Calculus

Why Did Democrats Really Vote No?

While Democrats publicly cite concerns about duplication and procedure, several other factors may have influenced the vote:

Legislative Calendar Pressures: The second session has limited time, and Democratic leadership may have wanted to focus on other priorities they considered more important or politically beneficial.

Constitutional Liability Concerns: Despite multiple states successfully enacting similar laws, Maine’s Judiciary Committee may have been genuinely worried about legal challenges. A court striking down Maine’s law would create negative publicity and potentially harmful precedent.

Desire to Control the Process: By keeping the issue within the Judiciary Committee, Democratic leadership maintains greater control over the final language, timing, and political messaging around any legislation.

Avoiding a Republican Win: Admitting the bill would have handed Republicans a procedural victory and let them claim credit for pushing the issue. Democrats may have preferred to advance their own version on their own timeline.

Legitimate Procedural Concerns: Some Democratic legislators may have genuinely believed that admitting multiple bills on the same topic would create confusion and waste limited committee resources.

The real answer likely involves a combination of these factors, with different Democratic legislators weighing them differently. However, none of these considerations change the fundamental reality: Maine children remain unprotected under current law.

The Republican Opportunity

From a political strategy standpoint, this vote is a gift to Republicans. It allows them to:

1. Question Democratic priorities: Why did naming bridges qualify as an emergency but protecting children didn't?
2. Create clear contrast: Republicans voted to protect children; Democrats voted no.
3. Avoid complexity: Unlike many policy debates that involve trade-offs, this appears straightforward to voters.
4. Appeal across party lines: Child protection is not inherently partisan, potentially attracting independent and moderate Democratic voters.
5. Use Democratic disagreement: The fact that one Democrat (Gramlich) and the bill's Democratic sponsor (Roeder) supported advancing it undermines party unity.

Republicans have already indicated they will feature this vote prominently in 2026 campaigns, particularly in competitive districts where Democratic legislators are vulnerable.

The 2026 Election Impact

Maine’s 2026 elections will be highly competitive. The governor’s mansion is open (Governor Mills is term-limited), and all 186 legislative seats are on the ballot. Democrats currently hold majorities in both chambers, but these margins could shift.

This vote gives Republicans a powerful campaign message that requires no complex policy explanation. The campaign ads practically write themselves:

- "Democrats voted against banning AI child pornography"
- "Five Democrats said no to protecting Maine children"
- "When it came time to protect kids, Democrats chose procedure over safety"

Democrats will need to invest significant time and resources explaining their vote—time and resources they would prefer to spend on their own priorities. In close races, this issue could be decisive.

What Happens Next

January 2026: Round Two

When the Maine Legislature reconvenes in January 2026, Representative Kuhn has indicated she will reintroduce child protection provisions addressing AI-generated sexual abuse material. This legislation will presumably go to the Judiciary Committee, where Kuhn serves as House chair.

Both Kuhn and Shira Burns of the Maine Prosecutors’ Association have expressed confidence that legislation will pass in 2026. “We’re on the tail end of addressing this issue, but I am very confident that this is something that the judiciary will look at, and we will be able to get a version through, because it’s needed,” Burns stated.

However, several questions remain:

Will the constitutional concerns be adequately addressed? If not, Maine could face legal challenges similar to those that struck down overly broad federal provisions in Ashcroft v. Free Speech Coalition.

How will Republicans respond? They could choose to support Democratic legislation in good faith, or they could use the debate as an opportunity to continue criticizing Democratic handling of the issue.

Will Democrats move quickly? The second session has limited time. If Democrats prioritize other legislation, child protection measures could be delayed again.

Can Democrats contain the political damage? Even if legislation passes in January, Republicans will note that it only happened after public outrage and Republican pressure.

The Model Legislation Available

Maine doesn’t need to reinvent the wheel. More than two dozen states have already enacted legislation that has withstood constitutional scrutiny. These states provide model language that Maine could adapt, potentially including:

Iowa’s Approach (HF 2240): Criminalizes the creation of sexually explicit images of children using AI, with clear definitions that track federal case law.

South Dakota’s Framework (S 79): Bans AI-generated child sexual abuse material while including specific intent requirements that help address constitutional concerns.

California’s Comprehensive Model: Multiple bills addressing different aspects of AI-generated harm, including specific provisions for child protection.

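Colorado's Risk-Based Framework: The Colorado AI Act regulates high-risk AI systems used in consequential decisions such as employment, lending, and housing. It does not directly target exploitative content, but its risk-based structure could inform how Maine frames obligations for AI developers.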

By examining what has worked in other jurisdictions, Maine legislators could craft legislation that protects children while respecting constitutional limitations.

The Enforcement Challenge

Even after legislation passes, Maine will face implementation challenges. Lieutenant Richards noted his team can only investigate about 14% of the tips they receive annually due to resource constraints. Criminalizing AI-generated child sexual abuse material will add to this caseload.

Maine will need to:

- Train law enforcement on identifying AI-generated material
- Provide forensic resources for digital evidence analysis
- Coordinate with federal agencies on complex cases
- Educate prosecutors on novel legal issues
- Potentially increase funding for the Computer Crimes Unit

These operational considerations should not delay criminalizing the conduct, but they highlight that passing legislation is only the first step toward meaningfully protecting children.

The Lessons for Other States

Maine as a Cautionary Tale

Other states considering AI-related legislation can learn from Maine’s experience:

Lesson 1: Don't Separate Constitutional Review from Drafting. Maine's mistake was allowing constitutional concerns to kill child protection provisions rather than using those concerns to refine the legislation. Constitutional analysis should inform drafting, not provide an excuse for inaction.

Lesson 2: Procedural Defenses Don't Work for Child Protection. Explaining to voters that you voted against child protection for procedural reasons is a losing political battle. If you believe legislation is needed but the current bill is flawed, vote yes and fix it in committee.

Lesson 3: Federal Precedent Provides Cover. Since federal law already bans morphed images of children and federal courts have upheld these provisions, states can legislate confidently in this area without excessive constitutional anxiety.

Lesson 4: Act Before Law Enforcement Testifies They're Powerless. By the time police are publicly stating they cannot investigate known cases, you've waited too long. Proactive legislation prevents this embarrassing situation.

Lesson 5: Bipartisan Child Protection Works Better. When child protection becomes partisan, everyone loses. States that have passed these protections with bipartisan support avoid the political circus Maine is experiencing.

The Fragmented U.S. Approach to AI Governance

Maine’s struggle highlights the broader challenge of fragmented AI regulation in the United States. Unlike the European Union, which has implemented a comprehensive AI Act applicable across all member states, the U.S. relies on a patchwork of state laws with varying requirements, thresholds, and enforcement mechanisms.

This creates several problems:

Compliance Complexity: Organizations operating in multiple states must navigate different requirements in each jurisdiction.

Regulatory Arbitrage: Bad actors can exploit states with weaker protections, knowing that digital content easily crosses state lines.

Innovation Uncertainty: Companies developing AI technologies face unpredictable legal landscapes that vary by location.

Enforcement Gaps: Coordination between state and federal agencies becomes more difficult when state laws differ significantly.

Constitutional Inconsistency: Some state laws may be struck down while others are upheld, creating confusion about what is permissible.

While some degree of state-level experimentation can be valuable, core child protection measures should be uniform across the country. Federal legislation addressing AI-generated child sexual abuse material would eliminate the current patchwork and ensure consistent protection.

The Technical Reality: This Problem Will Get Worse

The Trajectory of AI Capabilities

Lieutenant Richards testified that deepfakes have become dramatically more sophisticated in just two years. This trend will continue as AI models improve. Key technological developments on the horizon include:

More Accessible Tools: AI image generation is becoming easier to use, requiring less technical knowledge. User-friendly interfaces mean that creating synthetic images no longer requires programming skills or expensive software.

Higher Quality Output: Each generation of AI models produces more realistic images that are harder to distinguish from authentic photographs. Within a few years, synthetic images may be completely indistinguishable from real photos without forensic analysis.

Faster Generation: As processing power increases and models become more efficient, creating synthetic images takes less time and computational resources. What once required hours on powerful computers can now be done in seconds on a smartphone.

Lower Costs: The costs of accessing AI image generation continue to decrease. Open-source models and free web services mean virtually anyone can access these capabilities.

Video Synthesis: While current concern focuses primarily on still images, AI video generation is rapidly improving. Tools can already create realistic video deepfakes, and this capability will only become more sophisticated.

Real-Time Generation: Future systems may be able to generate synthetic content in real-time, enabling new forms of exploitation through live-streaming and video chat applications.

These technological trends mean that the problem will intensify regardless of what Maine does. The question is whether Maine’s laws will keep pace with technological capability.

The Arms Race: Detection vs. Generation

As AI generation improves, so do detection technologies. The result is an arms race between those creating synthetic content and those trying to identify it.

Current detection methods rely on:

- Artifacts and anomalies: AI-generated images often contain subtle imperfections that humans might miss but algorithms can detect
- Metadata analysis: Examining how images were created and modified
- Behavioral patterns: Identifying suspicious distribution patterns or account behaviors
- Forensic techniques: Analyzing digital fingerprints that reveal manipulation

However, as detection improves, generation techniques evolve to evade detection. This cat-and-mouse game will continue indefinitely, with neither side maintaining a permanent advantage.

For law enforcement, this means even sophisticated detection will never eliminate the problem. Legal prohibitions remain necessary regardless of detection capabilities.

The Bigger Picture: What This Controversy Reveals

The State of Child Protection in America

The Maine controversy reflects broader tensions in how America approaches child protection in the digital age:

Competing Values: Americans value both child safety and civil liberties, and these sometimes create tension. The challenge is finding appropriate balances rather than treating these as binary choices.

Partisan Polarization: Issues that should be bipartisan increasingly split along party lines, often for reasons unrelated to the underlying policy merits.

Procedural vs. Substantive: American legislative processes can prioritize procedural correctness over substantive outcomes, sometimes losing sight of real-world impacts.

Technology Gap: Legislators, many of whom lack technical expertise, struggle to understand and regulate rapidly evolving technologies.

Resource Constraints: Even when laws exist, enforcement agencies lack resources to investigate every case, forcing difficult triage decisions.

Federal-State Coordination: The division between federal and state authority creates gaps that bad actors exploit.

These systemic challenges won’t be solved by any single piece of legislation, but they must be acknowledged and addressed in policy debates.

What True “Child Protection” Looks Like

Effective child protection in the digital age requires multiple simultaneous approaches:

1. Criminal Prohibitions: Clear laws making it illegal to create, possess, or distribute exploitative material
2. Enforcement Resources: Adequate funding for specialized units like the Computer Crimes Unit
3. Technology Development: Investment in detection and forensic technologies
4. International Cooperation: Coordination with other countries, since digital content crosses borders
5. Platform Accountability: Requirements for technology companies to detect and report illegal content
6. Education and Prevention: Teaching children and parents about digital safety
7. Victim Support: Resources for children who have been exploited
8. Research and Data: Understanding the scope of the problem and the effectiveness of interventions

Maine’s current debate focuses primarily on criminal prohibitions—a necessary but not sufficient component. Even after passing legislation, Maine will need to invest in these other areas to meaningfully protect children.

The Privacy-Security Balance Revisited

This controversy demonstrates that the privacy vs. security debate often involves false dichotomies. It is possible to:

- Ban AI-generated child sexual abuse material AND protect encryption
- Give law enforcement tools to investigate crimes AND require warrants and oversight
- Hold technology platforms accountable AND preserve free expression
- Protect children from exploitation AND respect civil liberties for adults

The question is not whether to prioritize privacy or security, but how to advance both values simultaneously through thoughtful policy design.

Organizations working on digital privacy protection generally support criminalizing AI-generated child sexual abuse material, even as they oppose mass surveillance measures. This demonstrates that nuance is possible—and necessary—in these debates.

Conclusion: Where Maine Goes From Here

The January Test

When the Maine Legislature reconvenes in January 2026, all eyes will be on how lawmakers address this issue. Several potential outcomes exist:

Best Case Scenario: Democrats and Republicans work together to pass comprehensive legislation that criminalizes AI-generated child sexual abuse material with clear language that withstands constitutional scrutiny. The bill passes with strong bipartisan support, and both parties claim credit for protecting children.

Likely Scenario: Democrats introduce legislation through the Judiciary Committee. Republicans support it but continue to criticize Democrats for not acting sooner. The bill passes but with continued partisan tension around who deserves credit or blame.

Problematic Scenario: Constitutional concerns reemerge, causing delays or weakening of protections. The legislative session ends without comprehensive protections in place, extending Maine’s status as an outlier.

Worst Case Scenario: Partisan gridlock prevents any legislation from passing. Republicans use the issue as a campaign weapon while Democrats struggle to explain why they couldn’t deliver despite controlling both chambers.

The outcome will depend on whether legislators can set aside political considerations and focus on the substantive goal: ensuring Maine law enforcement can investigate and prosecute those who create and distribute AI-generated child sexual abuse material.

What Mainers Should Demand

Regardless of party affiliation, Maine residents should demand:

1. Immediate Action: Legislation should be introduced and passed early in the January session, not delayed until the end.
2. Clear Language: The bill should include clear definitions that distinguish prohibited content from protected speech, drawing on successful models from other states.
3. Constitutional Rigor: The legislation should be drafted with input from constitutional scholars and the Attorney General's office to withstand legal challenges.
4. Adequate Resources: Appropriations should accompany the legislation to ensure the Computer Crimes Unit can enforce the new law.
5. Transparency: The legislative process should include public hearings where law enforcement, prosecutors, civil liberties advocates, and parents can testify.
6. Bipartisan Cooperation: Both parties should work together rather than using this issue for political point-scoring.
7. Implementation Plans: The bill should include clear timelines for training law enforcement and developing enforcement protocols.

The Broader National Imperative

While Maine’s situation has unique political dynamics, the broader issue extends far beyond one state. As AI capabilities continue advancing, every jurisdiction must update its laws to address synthetic media used for exploitation.

This requires:

Federal Leadership: Congress should pass comprehensive federal legislation addressing AI-generated child sexual abuse material, providing a baseline that all states must meet or exceed.

International Cooperation: Since digital content crosses borders, international agreements and enforcement coordination become essential.

Technology Platform Accountability: Companies providing AI image generation services should implement safeguards to prevent misuse for creating exploitative content.

Research Investment: We need better data on the scope of AI-generated child exploitation and the effectiveness of different intervention approaches.

Public Awareness: Parents, educators, and children need information about these emerging risks and how to respond.

Final Thoughts

The October 23, 2025 Legislative Council vote represented a failure—not just of five Democratic legislators who voted no, but of a legislative system that allowed procedural considerations to trump substantive child protection needs.

The fact that this debate is happening at all in 2025, when more than two dozen states have already acted and the problem is rapidly escalating, demonstrates that Maine’s legislative process is not adequately responsive to technological change.

However, failures can be corrected. Maine has an opportunity in January 2026 to do what should have been done in October: pass comprehensive legislation that gives law enforcement the tools they need to protect Maine children from AI-generated sexual exploitation.

The question is whether Maine’s legislators—Democrats and Republicans alike—will rise to meet this moment or whether political considerations will continue to delay action while real children suffer real harm.

Lieutenant Richards will keep fielding calls from parents and prosecutors asking why Maine law doesn't protect their children. The number of tips to his Computer Crimes Unit will continue to grow. Technology will keep advancing. Reports of AI-generated child sexual abuse material, already up 1,325%, will likely keep climbing.

The only question is whether Maine’s laws will finally catch up to the reality its law enforcement is already confronting.

For the sake of Maine’s children, let’s hope the answer is yes.

Take Action

For Maine Residents:

- Contact your state legislators and demand action on AI-generated child sexual abuse material
- Sign the Change.org petition calling for comprehensive child protection legislation
- Attend public hearings when the Legislature reconvenes in January 2026
- Educate yourself about protecting your family's digital privacy

For Everyone:

- Learn about deepfakes and how they threaten privacy
- Understand the global landscape of AI regulation
- Review your state's AI laws and determine whether they adequately address these issues
- Support organizations working on child protection and digital safety

Report Suspected Child Exploitation:

- National Center for Missing and Exploited Children: CyberTipline.org
- FBI Internet Crime Complaint Center: IC3.gov
- Local law enforcement

The technology exists. The threat is real. The only question is whether our laws will protect the most vulnerable among us.

About the Author: This article was researched and written for MyPrivacy.Blog, part of a network of cybersecurity and privacy publications including ComplianceHub.Wiki, Breached.Company, and ScamWatchHQ.com. We provide in-depth analysis of privacy, security, and compliance issues affecting individuals and organizations.

Last Updated: November 1, 2025
