The British Army wants to make killing faster. Ethicists say we’re not asking the right questions.

Executive Summary

The UK Ministry of Defence has quietly awarded an £86 million contract to accelerate battlefield killing using artificial intelligence. Project Asgard, named, aptly, after the realm of Odin, the Norse god of war and wisdom, aims to compress military decision-making from hours to minutes to seconds. What once required careful human judgment may soon be delegated to algorithms processing sensor data at machine speed.

This article examines what Project Asgard actually is, why ethicists and human rights organizations are deeply concerned, and—crucially—why anyone who cares about civil liberties should be paying attention. Because the same AI technologies being deployed on NATO’s eastern flank today have a troubling tendency to come home.

The £86 Million Question

On February 10, 2026, the UK Ministry of Defence announced what it called a routine procurement contract: £86 million to BlackTree Technologies, a small British defence firm, for something called the “Dismounted Data System.” The dry government press release spoke of “AI-capable radios, headsets and tablets” for soldiers.

Buried beneath the bureaucratic language was something rather more significant: the latest phase of Project Asgard, the British Army’s flagship initiative to build what officials describe as a “digital targeting web”—an AI-powered system designed, in the words of General Sir Roly Walker, to help the Army “double its lethality” and “exponentially reduce the time to see, decide, and strike.”

“What took hours, now takes minutes.”

That’s not an exaggeration. It’s the explicit goal.

The £86 million for BlackTree is just the latest installment. The total investment in this “digital targeting web” exceeds £1 billion. It’s already operational—deployed on NATO’s eastern flank in Estonia, tested during military exercises within striking distance of the Russian border.

And it raises a question that no one in government seems willing to answer: What does it mean to compress the decision to kill to seconds?

What Is Project Asgard?

To understand why this matters, we need to understand what Project Asgard actually is. Not the acronyms, the jargon, or the sanitized press releases—but the operational reality.

The Components

Project Asgard is not a single system. It’s an integrated “system of systems” designed to connect every battlefield sensor, every weapon platform, and every soldier into a single AI-powered network. Think of it as a military nervous system, with AI serving as the brain.

| Component | Function | Status |
| --- | --- | --- |
| Dismounted Data System (DDS) | AI-capable radios, tablets, and headsets for individual soldiers | £86M contract awarded Feb 2026 |
| DART 250 Loitering Munition | Jet-powered attack drone that triples current strike range | Deployed and operational |
| Mission Support Network | AI-accelerated targeting and decision support | Operational in Estonia |
| Digital Targeting Web | Connects all systems via AI for automated coordination | £1B+ funding, full delivery by 2027 |

The Timeline

The speed of deployment is remarkable—and concerning:

- October 2024: Defence Secretary announces Project Asgard concept
- January 2025: Contracts awarded
- May 2025: Prototype deployed in Estonia during NATO Exercise Hedgehog
- September 2026: DDS rollout to frontline units begins
- 2027: Full capability delivery

From concept to operational deployment in eight months. For military bureaucracy, that’s warp speed. It’s also, as we’ll see, faster than the ethical frameworks needed to govern such systems.

The Contractor

The prime contractor, BlackTree Technologies, is a British small-to-medium enterprise (SME) based in Tewkesbury, Gloucestershire. The company’s Managing Director, Neil Clements-Hill, described a relationship with the Army spanning “many years,” suggesting this was less a competitive tender than an extension of an existing partnership.

The contract creates 12 jobs across Tewkesbury, Hereford, and Birmingham—a talking point for politicians, but hardly a transformative economic investment. The real significance lies not in job creation but in what the technology does.

The Kill Chain: What Are We Accelerating?

To understand Project Asgard’s significance, you need to understand a concept the military calls the “kill chain”—or, in more modern parlance, the “sensor-to-shooter” pipeline.

The Traditional Model

Historically, engaging a military target required a sequence of human decisions:

1. Sense/Find: Detect and identify a potential target (a vehicle, a position, a person)
2. Decide: Assess whether the target is legitimate, whether engagement is legal under the laws of war, whether the expected civilian harm is proportionate to the military advantage
3. Strike/Act: Deploy the appropriate weapon system

Each step required human judgment. The “decide” phase, in particular, demanded that trained professionals consider context, weigh competing factors, and make moral choices. A tank in a field might be a legitimate target; a tank in a hospital parking lot requires a very different calculus.

This process took time—often hours. And that, from a military perspective, was the problem.

Enter AI

Project Asgard is designed to compress this timeline through automation:

- Automated sensor fusion: AI systems aggregate data from radar, satellite imagery, signals intelligence, and ground sensors in real-time
- Pattern recognition: Machine learning algorithms identify potential targets based on characteristics the AI has been trained to recognize
- Recommendation engines: AI suggests the optimal weapon system (“shooter”) for each identified target
- Network coordination: Data flows automatically between platforms, eliminating human communication bottlenecks

The result, as the US Center for Security and Emerging Technology has noted, is that small military teams could potentially make “1,000 tactical decisions an hour.”

One thousand decisions. Per hour. Decisions that may include who lives and who dies.
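
To put that figure in human terms, a back-of-the-envelope calculation helps. The sketch below is illustrative only: it assumes decisions arrive evenly through the hour and are split equally across a team, and the function name and team sizes are hypothetical rather than drawn from any military source.

```python
SECONDS_PER_HOUR = 3600


def seconds_per_decision(decisions_per_hour: float, team_size: int = 1) -> float:
    """Average seconds available per decision for each team member.

    Assumes (hypothetically) an even workload with no breaks or overlap.
    """
    if decisions_per_hour <= 0 or team_size <= 0:
        raise ValueError("decisions_per_hour and team_size must be positive")
    return SECONDS_PER_HOUR * team_size / decisions_per_hour


if __name__ == "__main__":
    # 1,000 decisions an hour is the figure quoted above; team sizes are illustrative.
    for team_size in (1, 4, 10):
        budget = seconds_per_decision(1000, team_size)
        print(f"team of {team_size}: {budget:.1f} seconds per decision")
```

Under these assumptions a single operator has 3.6 seconds per decision, and even a team of ten has only 36 seconds each.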

From Hours to Seconds

General Walker’s quote bears repeating: “What took hours, now takes minutes.”

But it’s not stopping at minutes. As The Conversation noted in its analysis of the UK’s Strategic Defence Review:

“These webs would be able to identify and suggest possible targets considerably faster than humans. In many cases, leaving soldiers with only a few minutes, or indeed seconds, to decide whether these targets are appropriate or legitimate.”

The military sees this as an advantage—operating faster than an adversary can react. The doctrine they reference is Colonel John Boyd’s “OODA Loop” (Observe-Orient-Decide-Act): whoever cycles through this loop faster wins.

But the laws of war were not written for machine-speed decision-making. And the humans nominally “in the loop” may have only seconds to approve or reject an AI’s targeting recommendation.

Can that still be called human control?

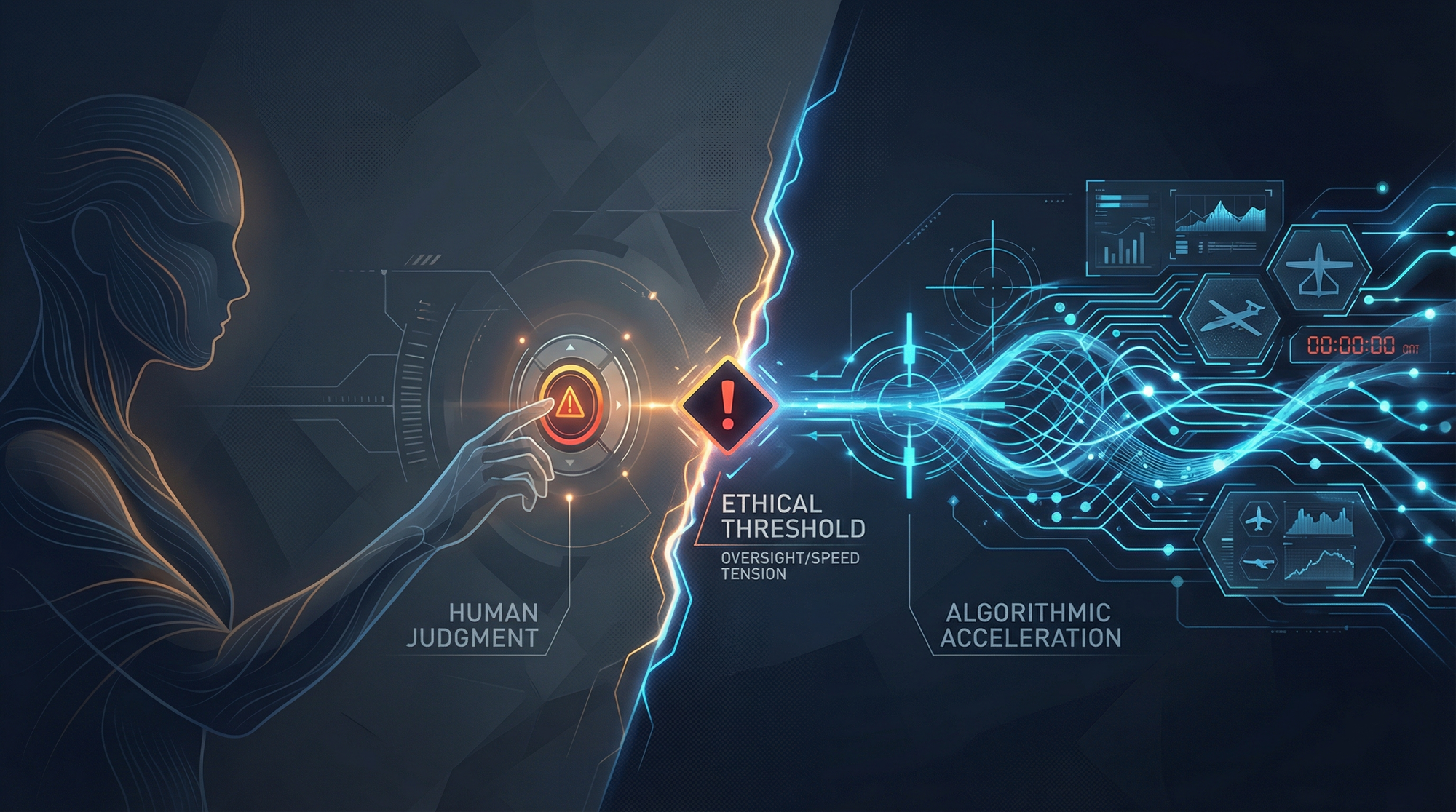

Speed Versus Humanity: The Fundamental Tension

Here is the crux of the ethical challenge: The entire purpose of AI targeting systems is to operate faster than humans can think.

The Meaningful Human Control Problem

International legal experts have coalesced around the concept of “meaningful human control” as the minimum requirement for lawful autonomous weapons systems. The principle is simple: a human being must make the decision to use lethal force, with sufficient information and time to make that decision meaningfully.

But what does “meaningful” mean when you have seconds?

The International Committee of the Red Cross (ICRC), which serves as guardian of international humanitarian law, has stated that autonomous weapons systems may leave “little room for human judgement” when targeting decisions happen at machine speed.

Consider the scenario: An AI system has processed hundreds of data streams, identified a target, assessed threat level, calculated collateral damage estimates, and recommended engagement—all in the time it takes you to read this sentence. A human operator sees a blinking icon on a screen. They have seconds to approve or reject.

That human has not observed what the AI observed. They cannot evaluate the AI’s reasoning (which, in machine learning systems, may be opaque even to the engineers who built it). They are being asked to trust the machine.

And studies consistently show that humans do trust machines—especially under stress. This phenomenon, known as “automation bias,” means operators tend to approve AI recommendations without proper scrutiny. The machine says shoot; the human clicks approve.

Is that meaningful control? Or is it the illusion of control—a human rubber-stamp on algorithmic killing?

The Cognitive Asymmetry

Here’s an uncomfortable truth: if humans genuinely deliberated on every AI targeting recommendation—taking the time to verify, question, and independently assess—the system would fail to deliver its promised speed advantage. The entire point is that humans can’t operate at machine speed.

This creates what researchers call a cognitive asymmetry. The AI processes information faster than humans can evaluate. The human becomes a bottleneck that must either slow the system down (negating its purpose) or become a mere formality (negating human control).

There is no technical solution to this dilemma. It is an inherent tension between speed and meaningful judgment.
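
The trade-off can be made concrete with simple throughput arithmetic. This is a minimal sketch, not a model of any real system: the recommendation rate reuses the 1,000-per-hour figure cited earlier, while the review times, the single reviewer, and the function names are assumptions chosen for illustration.

```python
def review_capacity_per_hour(review_seconds: float, reviewers: int = 1) -> float:
    """Recommendations a team can review per hour at a fixed time per review."""
    if review_seconds <= 0 or reviewers <= 0:
        raise ValueError("review_seconds and reviewers must be positive")
    return reviewers * 3600 / review_seconds


def unreviewed_backlog(
    recommendations_per_hour: float,
    review_seconds: float,
    reviewers: int = 1,
    hours: float = 1.0,
) -> float:
    """Recommendations left unreviewed after `hours` (never below zero)."""
    capacity = review_capacity_per_hour(review_seconds, reviewers)
    return max(0.0, (recommendations_per_hour - capacity) * hours)


if __name__ == "__main__":
    # Hypothetical review times: a glance, a minute, and five minutes of deliberation.
    for review_seconds in (3, 60, 300):
        backlog = unreviewed_backlog(1000, review_seconds)
        print(f"{review_seconds:>3}s per review: {backlog:.0f} unreviewed per hour")
```

With one reviewer, a 60-second review leaves 940 of 1,000 recommendations unexamined each hour. Only reviews of 3.6 seconds or less keep pace, which is the dilemma in numerical form: either the queue grows or the review shrinks.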

What the Ethicists Are Saying

The ethical objections to AI-accelerated targeting are not the complaints of naive peaceniks who don’t understand military necessity. They come from some of the most respected voices in international humanitarian law, human rights advocacy, and academic ethics.

The ICRC Position

The International Committee of the Red Cross, the organization founded in 1863 that has stewarded the Geneva Conventions since the first was adopted in 1864, has issued increasingly urgent warnings about autonomous weapons systems.

Their position is carefully reasoned and legally grounded. The ICRC argues that certain autonomous weapons systems should be prohibited outright:

- Systems that are unpredictable in their effects
- Systems designed to apply force against persons (as opposed to objects)

Their concern is not theoretical. It’s based on decades of experience with the laws of armed conflict and a clear-eyed assessment of what AI systems can and cannot do.

The ICRC notes that autonomous weapons systems would face “serious difficulties” in meeting the legal requirements for targeting: distinguishing combatants from civilians, assessing proportionality, and taking precautions to minimize civilian harm.

Human Rights Watch Analysis

Human Rights Watch (HRW) published a comprehensive report in April 2025 titled “A Hazard to Human Rights: Autonomous Weapons Systems and Digital Decision-Making.” Their analysis is damning.

“Autonomous weapons systems would instrumentalize and dehumanize their targets by relying on algorithms that reduce people to data points.”

This is more than rhetoric. HRW’s point is that AI systems necessarily operate by pattern matching—identifying targets based on features that can be quantified and processed. The warmth of a body. The shape of a silhouette. The pattern of movement.

What AI cannot assess is the full context of a human life. The civilian who picked up a combatant’s rifle to move it out of their child’s reach. The medic reaching into a bag that, to an algorithm trained on weapons profiles, looks threatening. The wedding party mistaken for a military convoy.

These are not hypothetical scenarios. They are the kinds of tragic errors that occur even with extensive human oversight. The question is whether removing that oversight—or reducing it to seconds—makes such errors more or less likely.

HRW’s conclusion: “There are obstacles to holding individual operators criminally liable for the unpredictable actions of a machine they cannot understand… the use of autonomous weapons systems would create an accountability gap.”

The Stop Killer Robots Coalition

Perhaps the most visible advocacy movement is the Campaign to Stop Killer Robots, a coalition of 274+ organizations across the globe united in calling for new international law to ensure “human control in the use of force.”

The coalition includes Amnesty International, Article 36, the Nobel Women’s Initiative, and dozens of national civil society organizations. It represents a remarkable breadth of concern—from peace groups to tech industry watchdogs to academic institutions.

Their message is simple: before these technologies become normalized, we need binding international rules that preserve human agency in life-and-death decisions.

Academic Voices

Neil Renic and Ingvild Bode of Queen Mary University of London have published extensively on the ethical implications of the UK’s AI warfare investments. Their analysis, published in The Conversation in response to the UK’s Strategic Defence Review, is worth quoting at length:

“Unlike other technologies used in war, AI is more than an instrument. It is part of a cognitive system of humans and machines, which makes human control a lot more complicated… It can instead lead to an erosion of moral restraint, creating a war environment where technological processes replace moral reasoning.”

This is the heart of the concern. It’s not just about whether AI makes targeting errors. It’s about whether the rush to AI-enabled warfare fundamentally changes the character of armed conflict—replacing the moral deliberation that has (imperfectly but meaningfully) constrained violence with algorithmic calculation that optimizes for “lethality.”

The Legal Vacuum: International Humanitarian Law in the Machine Age

The laws of armed conflict—codified in the Geneva Conventions and their Additional Protocols—were written with human decision-makers in mind. They impose obligations that assume a person is making the decision to attack.

Three Principles at Risk

Distinction: International humanitarian law requires combatants to distinguish between military targets and civilians at all times. This is not a simple classification problem; it requires contextual judgment. A farmer carrying a hoe may look, to certain sensors, like a combatant carrying a weapon. AI systems process patterns, not context.

Proportionality: Even when a target is legitimate, attacks must not cause excessive civilian harm relative to the anticipated military advantage. This requires a prediction about outcomes and a value judgment about acceptable cost. Can an algorithm meaningfully assess whether destroying a bridge is “worth” the three civilian lives likely to be lost? How does it weigh military advantage against human suffering?

Precaution: Those planning and conducting attacks must do everything feasible to verify that targets are military and to minimize incidental civilian harm. “Everything feasible” is a human standard—it implies effort, care, and moral responsibility. A machine cannot take precautions in any meaningful sense; it simply executes its programming.

The Article 36 Requirement

Under Additional Protocol I to the Geneva Conventions, states are required to conduct legal reviews of new weapons to determine whether they comply with international humanitarian law. This “Article 36 review” is supposed to happen before deployment.

Some states—notably Russia and the United States—argue that Article 36 provides sufficient discretion for states to determine compliance on their own. Critics argue that the mere act of human review is insufficient if the weapon system itself operates beyond human control once deployed.

The UK commits to conducting Article 36 reviews for new weapons systems. But the pace of Project Asgard’s deployment—concept to Estonia in eight months—raises questions about how thorough such reviews can be when operational urgency is paramount.

The Accountability Gap

Perhaps the most troubling legal issue is accountability. Under international criminal law, individuals can be held responsible for war crimes. But who is responsible when an AI system makes a targeting error?

- The soldier who clicked “approve” based on 3 seconds of information?
- The commanding officer who authorized the use of the system?
- The programmer who designed the algorithm?
- The contractor who trained the model on potentially biased data?
- The defence ministry that procured it?

Human Rights Watch calls this the “accountability gap”—a structural problem that may make war crimes effectively unprosecutable when AI systems are involved. If no one can be held responsible, what deters violations?

The UK’s “Dualist” Position: Having It Both Ways?

The UK government is not oblivious to these concerns. It has articulated a position on autonomous weapons that attempts to thread the needle between military capability and ethical responsibility.

The REAIM Declaration

In February 2026, just days before the BlackTree contract announcement, the UK signed the REAIM (Responsible AI in the Military Domain) declaration at a summit in the Netherlands. This voluntary commitment to “responsible” military AI was signed by 35 of the 85 participating countries.

Notably, the United States and China—the world’s two largest military powers—opted out.

The declaration is not legally binding. It establishes principles but creates no enforcement mechanisms. It is, in essence, a promise to be responsible without defining what responsibility means in practice.

The Official MOD Position

The UK Ministry of Defence published its Defence Artificial Intelligence Strategy in 2022, articulating four pillars:

1. Effective: Battle-winning capability
2. Efficient: Innovative technology for productivity
3. Trusted: Safe, reliable AI with commitment to lawful/ethical use
4. Influential: Shaping global AI development

The strategy commits to “lawful and ethical” AI use and promises bias mitigation, regular auditing, and suitable scrutiny. These are the right words.

But the same Strategic Defence Review that produced these commitments also set the goal of making the armed forces “ten times more lethal.” That’s not a metaphor—it’s an explicit operational objective.

The Tension

Here is the fundamental tension the UK has not resolved: The entire purpose of Project Asgard is to operate faster than human deliberation allows. The ethical commitments require meaningful human control. These two goals are in direct conflict.

You cannot promise both “exponentially reduced” decision times and meaningful human judgment. You cannot commit to “suitable scrutiny” when the system is designed to outpace scrutiny.

The UK’s position, in practice, appears to be: trust us. Trust that we will use these systems responsibly. Trust that humans will remain in control even as we engineer them out of the loop. Trust that our intentions are good.

That is not accountability. It is faith.

The Arms Race Context: We’re Not Alone

It would be unfair to criticize the UK in isolation. Project Asgard exists within a global context of accelerating military AI development.

US JADC2: $3 Billion and Counting

The US Department of Defense’s Joint All-Domain Command and Control (JADC2) initiative is the American equivalent of Project Asgard—albeit at vastly greater scale.

| Aspect | Details |
| --- | --- |
| Concept | Connect every sensor, platform, and soldier across all domains (air, land, sea, space, cyber) |
| Budget | $3+ billion requested for 2023 alone |
| Goal | Compress “sensor-to-shooter” timeline to machine speed |
| Approach | “Uber model”: AI automatically matches threats to optimal responders |

The US approach explicitly embraces automation. One senior Pentagon official compared it to ride-sharing: the AI identifies a threat, finds the nearest available weapon system with the right capabilities, and automatically assigns the engagement.

This is not human control. This is logistics optimization applied to killing.

NATO’s Innovation Push

NATO has established multiple initiatives to accelerate military AI adoption:

| Initiative | Purpose | Investment |
| --- | --- | --- |
| DIANA | Defence Innovation Accelerator for the North Atlantic | 20+ accelerator sites globally |
| NATO Innovation Fund | Venture capital for dual-use technology | €1 billion |
| Data and AI Review Board | Develop responsible AI principles | Active, developing standards |

The €1 billion NATO Innovation Fund is particularly significant. It represents direct Alliance investment in the technologies that will power autonomous weapons systems—positioned as “dual-use” but with clear military applications.

The Five Eyes Integration

The UK is not developing these capabilities in isolation. The US Navy’s Project Overmatch has signed agreements with all Five Eyes allies (UK, Australia, Canada, New Zealand) for embedded development and interoperability.

This means UK systems must be compatible with US systems. And US systems are being designed for autonomous operation at scales that dwarf Project Asgard.

The UK’s ethical commitments exist within an Alliance framework where the largest partner has explicitly rejected the most restrictive interpretations of “meaningful human control.”

The Dual-Use Problem: From Battlefield to Beat

Here is where readers of myprivacy.blog should pay particularly close attention. Military AI technologies have a troubling history of coming home.

Historical Precedents

Consider the trajectory of other military technologies:

- Facial recognition: Developed for military identification purposes, now deployed by UK police forces for live surveillance in public spaces (see our analysis of key privacy risks associated with AI)
- Predictive analytics: Born from military targeting concepts, now used in predictive policing algorithms that disproportionately impact minority communities
- Surveillance drones: Originally battlefield reconnaissance platforms, now operated by UK police forces with minimal transparency
- IMSI catchers: Military signals intelligence tools, now used by police to track mobile phones without warrants

The pattern is consistent: technologies developed for warfare—with the exceptional legal frameworks that apply during armed conflict—migrate to domestic law enforcement, where different rules (should) apply.

The AI Trajectory

The specific capabilities being developed under Project Asgard—pattern recognition, sensor fusion, automated decision support, predictive analytics—have obvious applications in domestic policing and surveillance.

- Pattern recognition: Identifying “threats” based on behavior profiles
- Sensor fusion: Aggregating CCTV, mobile phone data, social media, and biometrics
- Automated decision support: AI systems recommending police interventions
- Predictive targeting: Identifying individuals likely to commit crimes

If this sounds like science fiction, it shouldn’t. These capabilities already exist in various forms. The question is whether military-grade systems—designed for speed and lethality—will follow the established migration path from foreign battlefields to domestic streets.

Tech Company Convergence

The line between military and civilian AI is already blurring at the corporate level. A recent analysis by the Perry World House at the University of Pennsylvania documented how:

“Meta, Google, Palantir, Anthropic, and OpenAI have partnered with the U.S. military and other U.S. allies (e.g., the United Kingdom, Israel)… with both Meta and Google quietly removing long-standing commitments to not use AI technology in weapons or surveillance.”

Palantir, which has extensive UK government contracts, explicitly operates in both the military and domestic policing domains. The same company providing targeting support to military operations provides predictive policing tools to civilian law enforcement.

The Alarm from Rights Groups

Human Rights Watch explicitly warned about this trajectory:

“Autonomous weapons systems present numerous risks to humanity… The threats they pose are far reaching because of their expected use in law enforcement operations as well as during armed conflict.”

The ICRC has similarly noted:

“As an intrinsically dual-use and repurposable technology, AI systems are frequently employed by different state and non-state actors, which escalates concerns about their impact in the broader peace and security domain.”

Without clear legal barriers—legislation that explicitly prohibits the domestic deployment of military-grade AI decision systems—the migration from battlefield to beat is not a question of if, but when.

The Bias Problem Compounds

If military AI migrates to domestic use, it brings its biases with it.

Studies have consistently documented that facial recognition systems—the same pattern recognition technology at the heart of targeting systems—perform poorly on people with darker skin. False positive rates can be 10 to 100 times higher for Black faces than white faces.

In warfare, this might mean misidentifying a civilian as a combatant. In policing, it means wrongful stops, wrongful arrests, and the reinforcement of existing racial disparities in the criminal justice system.
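
A rough calculation shows how such a disparity compounds at scale. The sketch below is illustrative only: the baseline false positive rate and the number of people scanned are hypothetical placeholders, not measured values, and only the 10x and 100x multipliers come from the range quoted above.

```python
def expected_false_matches(people_scanned: int, false_positive_rate: float) -> float:
    """Expected number of people wrongly flagged in a batch of scans."""
    if people_scanned < 0 or not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("need people_scanned >= 0 and a rate between 0 and 1")
    return people_scanned * false_positive_rate


BASELINE_FPR = 0.0001    # hypothetical: 1 false match per 10,000 scans
PEOPLE_SCANNED = 50_000  # hypothetical number of faces scanned

if __name__ == "__main__":
    # Multipliers of 10 and 100 reflect the disparity range quoted in the text.
    for multiplier in (1, 10, 100):
        rate = BASELINE_FPR * multiplier
        flagged = expected_false_matches(PEOPLE_SCANNED, rate)
        print(f"{multiplier:>3}x baseline rate: about {flagged:.0f} false matches")
```

Under these assumed numbers, a scan that wrongly flags about 5 people at the baseline rate would wrongly flag about 50 to 500 people at the higher rates.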

The UK has already seen concerning developments:

- Reports of UK police using passport databases for facial recognition searches
- Expansion of live facial recognition deployment in police vans
- The Information Commissioner’s Office demanding clarity on police facial recognition bias

Project Asgard’s pattern recognition systems are being trained on data from military contexts. That training data reflects military judgments about what constitutes a “threat”—judgments made in environments where the baseline assumption is hostility, where profiles are based on combatant characteristics, where error costs are calculated in military rather than civilian terms.

Those assumptions, those biases, those error tolerances—if they migrate to domestic policing, they migrate to contexts where the stakes and standards should be fundamentally different.

What Citizens Should Demand

If you’ve read this far, you may be wondering: what can actually be done?

The trajectory we’re describing—AI-accelerated warfare, erosion of human control, dual-use migration to domestic surveillance—is not inevitable. It is the result of choices. And choices can be changed.

Transparency

The first demand is the most basic: we have a right to know.

- What are the specific operational rules governing AI targeting decisions in Project Asgard?
- What is the minimum time allowed for human review before AI-recommended strikes are executed?
- How are “meaningful human control” requirements operationalized in practice?
- What testing and auditing has been conducted, and by whom?
- What happens when the system makes errors?

None of this information is publicly available. The UK government speaks of “responsible AI” without specifying what that means operationally. The military invokes “security” to avoid scrutiny of decisions that implicate fundamental legal and ethical principles.

Secrecy is not security. Secrecy is a shield for decisions that cannot withstand public examination.

Parliamentary Oversight

The UK Parliament has mechanisms for defence oversight—most notably, the Defence Select Committee. But oversight is only effective if it is informed, independent, and taken seriously.

Citizens should demand:

- Detailed parliamentary hearings on autonomous weapons policy
- Publication of Article 36 legal reviews for AI targeting systems
- Independent technical audits of system capabilities and limitations
- Regular reporting on operational use of AI in targeting decisions

Domestic Legal Barriers

To prevent military AI migration to policing, we need explicit legal prohibitions:

- Legislation barring the deployment of military-grade AI decision systems for domestic law enforcement
- Mandatory human decision-making for any police use of force
- Strict limits on data-sharing between military and police AI systems
- Independent oversight of any AI systems used in policing

These barriers do not exist today. The Investigatory Powers Act regulates some surveillance capabilities, but the regulatory framework for AI in policing remains fragmented and inadequate.

International Engagement

The UK’s “dualist” position on autonomous weapons—ban some, regulate others—is a reasonable starting point for international negotiations. But those negotiations have stalled, in large part because major military powers (US, China, Russia) see advantage in the absence of rules.

Civil society pressure can matter. The international campaign against landmines succeeded because public opinion made continued use politically costly. The same can happen with autonomous weapons—but only if people understand what’s at stake.

The Precedent Being Set

Here is the final, uncomfortable truth: what the UK does with Project Asgard matters beyond British borders.

The UK is a permanent member of the UN Security Council. It is a leading member of NATO. It has historically positioned itself as a champion of international humanitarian law—a country that follows the rules even when rules are inconvenient.

If the UK races to deploy AI targeting systems with minimal human control, it sets a precedent. It signals to other countries—democracies and autocracies alike—that speed matters more than scrutiny, that lethality outweighs legality, that the competitive advantage of killing faster justifies the erosion of human judgment.

Other countries will follow. They will point to UK systems, to NATO interoperability requirements, to the “Western standard” that has been set. And the restraints that have (imperfectly) governed armed conflict for decades will erode not through explicit rejection, but through technological obsolescence.

The time to establish norms is now—before AI targeting becomes the default, before the “meaningful human control” debate becomes academic, before the precedent hardens into practice.

Conclusion: The Choice We Face

£86 million is not a lot of money in defence terms. It’s a rounding error compared to the UK’s total military budget. BlackTree Technologies is a small company; the 12 jobs created are economically trivial.

But Project Asgard represents something more than its contract value. It represents a choice about what kind of warfare we are willing to accept—and, by extension, what kind of surveillance and policing we may inherit.

The choice is not between AI and no AI. That debate is over; AI will be integrated into military systems. The choice is between AI systems designed with human control as a genuine requirement versus AI systems where human control is a legal fiction—a box to check, a button to click, a formality between algorithmic recommendation and lethal execution.

The UK’s stated position is that it wants both: AI speed and human control, doubled lethality and ethical integrity. But these commitments pull in opposite directions. At some point, the tension must be resolved.

The military will resolve it in favor of capability—that is its institutional imperative. The question is whether civil society, parliament, and the courts will impose countervailing requirements: transparency, accountability, genuine human control, and legal barriers against domestic migration.

The Norse god Odin, ruler of Asgard, gave up an eye to gain wisdom. The question is what the UK is willing to give up in its pursuit of AI-enabled lethality, and whether we will recognize the cost before it is too late to reverse it.

Key Data Points

| Metric | Value | Source |
| --- | --- | --- |
| DDS Contract Value (BlackTree) | Up to £86M | UK GOV.UK, Feb 2026 |
| Total Digital Targeting Web Investment | £1B+ | UK MOD, 2025 |
| Time from Asgard concept to deployment | 8 months | UK MOD, 2025 |
| Countries signing REAIM declaration | 35 of 85 | Reuters, Feb 2026 |
| Countries NOT signing (notable) | US, China | Reuters, Feb 2026 |
| Stop Killer Robots coalition members | 274+ | SKR Website, 2026 |
| Countries supporting LAWS treaty negotiations | 129+ | HRW, 2025 |
| US JADC2 budget request (2023) | $3B+ | DefenseScoop |
| NATO Innovation Fund | €1B | NATO, 2022 |
| UK Defence SME spending target | £7.5B annually | UK MOD, by 2028 |

Sources & References

1. UK Government. “Soldiers to get new AI capable radios, headsets and tablets with futuristic sensor data.” GOV.UK, February 2026.
2. The Register. “British Army splashes $86M on AI gear.” February 10, 2026.
3. UK MOD Defence Equipment & Support. “DES helps deliver new lethal digital-targeting web system to soldiers.” July 2025.
4. British Army. “New technology unveiled that will increase British Army lethality.” 2025.
5. GovFacts. “The Pentagon’s Gamble: How JADC2 Aims to Revolutionize Modern Warfare.” June 2025.
6. NATO. “Emerging and Disruptive Technologies.” 2025.
7. International Committee of the Red Cross. “Autonomous Weapons.” ICRC Position Paper, 2025.
8. Human Rights Watch. “A Hazard to Human Rights: Autonomous Weapons Systems and Digital Decision-Making.” April 2025.
9. Lieber Institute, West Point. “National Positions on LAWS Governance.” February 2025.
10. ArXiv. “AI-Powered Autonomous Weapons Risk Geopolitical Instability.” 2024.
11. Reuters. “US, China opt out of joint declaration on AI use in military.” February 5, 2026.
12. The Conversation. “Britain’s plan for defence AI risks the ethical and legal integrity of the military.” June 2025.
13. UK MOD. “Defence Artificial Intelligence Strategy.” June 2022.
14. Stop Killer Robots. Campaign Coalition Website. 2026.
15. Perry World House, University of Pennsylvania. Analysis of tech company military partnerships. 2025.