Executive Summary

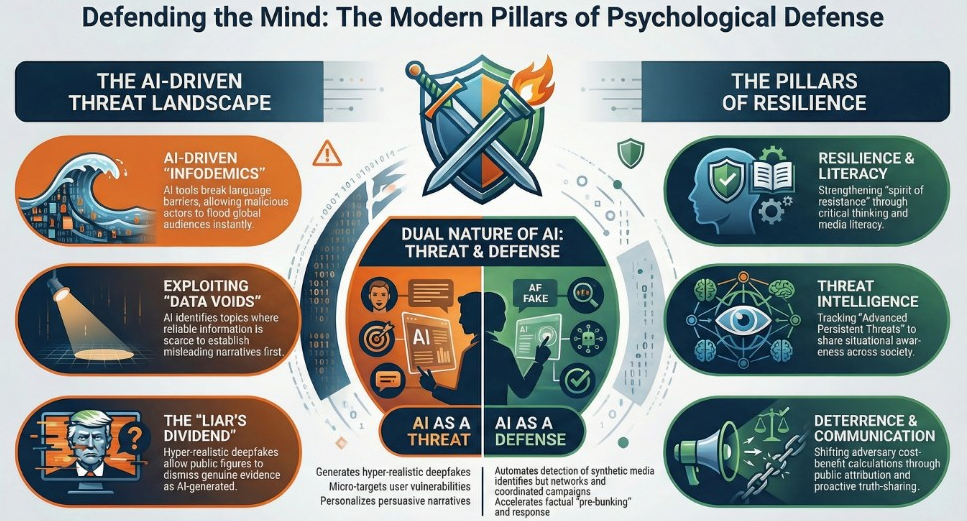

This briefing document synthesizes key insights regarding the contemporary landscape of psychological defence and malign information influence. In an era of rapid technological advancement and shifting geopolitical alliances, the resilience of democratic societies depends on the ability of individuals and institutions to withstand sophisticated manipulation.

Key Takeaways:

- Technological Acceleration: Artificial Intelligence (AI) has globalized disinformation by breaking language barriers and identifying “data voids” (areas lacking reliable information) to exploit with tailored, automated content.
- Cognitive Exploitation: Disinformation campaigns bypass analytical reasoning (System 2 thinking) by targeting intuitive, emotional responses (System 1 thinking), utilizing cognitive biases like confirmation bias and the availability heuristic.
- New Battlegrounds: Video games and gaming-adjacent platforms (e.g., Discord, Steam) have emerged as critical domains for propaganda, recruitment, and the dissemination of hyper-realistic deceptive content.
- The Swedish Model: Sweden’s “Total Defence” system integrates military and civil dimensions, emphasizing that psychological defence is a whole-of-society responsibility rooted in citizen resilience and the “spirit of resistance.”
- Defensive Strategy: Effective democratic defence relies on transparency, Media and Information Literacy (MIL), and “counter-speech” rather than censorship, which is fundamentally prohibited in democratic frameworks.

Download: Psychological_defence_TGA.pdf (2 MB)

I. The Technological Landscape of Modern Disinformation

AI-Driven Linguistic and Narrative Reach

AI technologies have fundamentally transformed the scale and speed of influence operations.

- Globalized Reach: AI translation tools dismantle language barriers, allowing malicious actors to distribute disinformation across diverse linguistic groups simultaneously, potentially leading to an “AI-driven infodemic.”
- Data Voids: AI algorithms analyze online interactions to identify “data voids” (topics where credible information is scarce). Malicious actors exploit these gaps to establish misleading narratives before factual content can be produced.
- Tailored Misinformation: By analyzing user data on social media, AI identifies psychological vulnerabilities to craft personalized messages that resonate deeply with specific audiences, making falsehoods appear more palatable.

Generative AI and the “Liar’s Dividend”

The rise of generative models introduces new risks to information integrity:

- Deepfakes: Hyper-realistic synthetic audio and video are weaponized to harass individuals or create false narratives about public figures.
- The Liar’s Dividend: The mere existence of deepfakes allows public figures to dismiss genuine, incriminating evidence as AI-generated, capitalizing on growing public skepticism of all media.
- Automated Personas: Advanced chatbots engage users in conversation to spread misinformation or conduct “character hacking.”

II. The Emotional and Cognitive Architecture of Influence

Exploiting Human Cognition

Influence operations target “System 1” thinking—the brain’s fast, emotional, and intuitive processing—to bypass “System 2” analytical evaluation.

| Cognitive Mechanism | Impact on Perception |
| --- | --- |
| Confirmation Bias | Favors information that aligns with pre-existing beliefs, entrenching polarization. |
| Availability Heuristic | Overestimates the likelihood of threats based on vivid or emotionally charged imagery. |
| Loss Aversion | Frames social or political issues as existential threats to trigger defensive or risk-seeking reactions. |
| The Framing Effect | Manipulates decision-making based on how information is presented rather than the facts themselves. |
| Illusion of Truth | Uses repeated exposure to a message to enhance its perceived credibility (an effect related to the “mere exposure effect”). |

The Power of Viral Emotions

Emotions such as fear and anger are the primary drivers of viral disinformation.

- Affective Polarization: Anger fuels hostility toward “outgroups,” making individuals more likely to believe and share derogatory fake news about political opponents.
- Rhetorical Pathos: Disinformation prioritizes emotional appeals (pathos) over rational argument (logos), making false claims “feel” right to the target audience.
- Algorithmic Amplification: Social media algorithms prioritize content that triggers strong emotional reactions, creating a distorted perception of reality where outrage spreads faster than neutral facts.

III. Digital Frontiers: Gaming and Video Games

Video games have evolved into active battlegrounds where reality is contested and users are targeted in immersive environments.

- Reframing Reality: Footage from realistic games (e.g., ARMA 3) is often presented as real-world combat footage from conflicts in Ukraine or Gaza to spread disinformation.
- In-Game Propaganda: Malicious actors create “mods” (modifications) for popular games like Roblox to push specific political narratives or simulate attacks.
- Unmoderated Communities: Platforms like Discord and Steam provide semi-private spaces for extremist recruitment and the leaking of classified documents (e.g., the 2023 Pentagon document leak, which circulated on Discord servers, including one dedicated to Minecraft).
- Soft Power and Censorship: Authoritarian regimes use their market power to pressure developers into self-censoring content that contradicts national narratives, such as removing LGBTQ+ representation.

IV. The Swedish Framework: Total Defence and Resilience

The Total Defence Concept

Resumed in 2015, Sweden’s “Total Defence” is a whole-of-society approach comprising military and civil dimensions.

- Military Defence: Focused on territorial integrity and upholding national interests.
- Civil Defence: Aimed at protecting the civilian population and ensuring essential societal functions (e.g., energy, food supply, and health care).
- The Duty of Total Defence: Everyone living in Sweden aged 16 to 70 is subject to total defence service, which includes military conscription, civilian service, and general compulsory national service.

Citizen Resilience and the “Spirit of Resistance”

The ultimate guarantee of democracy is an informed and critical citizenry.

- Resource and Commitment: Resilience is built on education (civic skills), trust (in institutions and quality media), and networks (the willingness of citizens to help one another).
- Spirit of Resistance: This refers to the mental readiness of individual citizens to take responsibility in a crisis. It is rooted in the belief that one’s way of life is worth defending.
- Proactive Information: The brochure “If Crisis or War Comes” is distributed to all households to raise awareness of personal responsibility in the face of disruption or disinformation.

V. Strategies for Democratic Defence

Countering Disinformation Without Censorship

Democratic societies must defend themselves using methods that do not undermine their own values.

- Prohibition of Censorship: Swedish law and the European Convention on Human Rights generally prohibit prior restraint (censoring information before publication).
- Counter-Speech: Authorities have the right and obligation to provide factually correct information to counter inaccurate claims related to their area of activity.
- Media and Information Literacy (MIL): Developing citizens’ critical thinking and source criticism skills is the most effective long-term protection against manipulation.
- Strategic Inoculation (“Prebunking”): Disclosing intelligence about anticipated disinformation campaigns before they take root prepares the public to interpret hostile narratives critically.

The Role of Intelligence and OSINT

- Secret Intelligence: The Swedish model focuses on “secret intelligence” as a complementary resource for decision-makers, rather than providing definitive, consolidated judgments.
- OSINT (Open-Source Intelligence): The “demonopolization” of intelligence has empowered civil society actors (e.g., Bellingcat) to investigate subjects of public interest.
- OSINT Credibility: While independent OSINT actors can be more credible public messengers than government agencies, they may lack formal tradecraft and are susceptible to “sensationalist analyses” driven by the need for online attention.

VI. Case Study: The LVU Disinformation Campaign

The campaign against Swedish social services, focused on their use of the Care of Young Persons Act (LVU), illustrates how emotional architecture drives virality:

- Narrative: Spread claims that Swedish authorities were “kidnapping” Muslim children for assimilation.
- Emotional Trigger: Tapped into primal parental fears for children’s safety.
- Visual Feedback Loop: Used videos of crying children and distressed parents to evoke immediate sympathy and outrage.
- Impact: Exploited “us versus them” dynamics, leading to radicalization and threats against social workers, making factual refutations from authorities difficult for the target audience to hear.