MAJOR UPDATE: YouTube has been caught in what could be one of the most expensive lies in social media history. The platform falsely terminated nearly 5 million creator channels using AI automation and categorically denied that AI was involved in the termination and appeals process. Now overwhelming evidence has exposed its deceptive practices.

The stakes? Under Section 5 of the FTC Act (15 U.S.C. §45), deceptive acts or practices are illegal. Civil penalties of up to $53,088 per violation (the 2025 inflation-adjusted maximum) apply in specific circumstances, such as knowing violations of an FTC rule or of a prior order. If the Federal Trade Commission finds YouTube violated Section 5 under those conditions, then with millions of affected creators the potential fines could reach into the billions.

The Scandal Unfolds: 5 Million Channels Terminated

The Mass Termination Event

Starting in early November 2025, YouTube began what can only be described as a purge of creator channels. The numbers are staggering:

- Nearly 5 million channels terminated (YouTube’s own data shows 4.8 million channels removed and 9.5 million videos deleted)
- Hundreds of thousands of appeals filed by legitimate creators
- Appeals rejected within 2-5 minutes, some in under 60 seconds
- Trending on X (Twitter) for four consecutive days as creators shared their stories

The terminations targeted creators across all categories:

- Educational content creators making documentaries
- Fitness instructors and health coaches
- Tech reviewers and tutorial channels
- Gaming streamers with hundreds of thousands of subscribers
- Small businesses using YouTube for marketing

Real Creator Stories

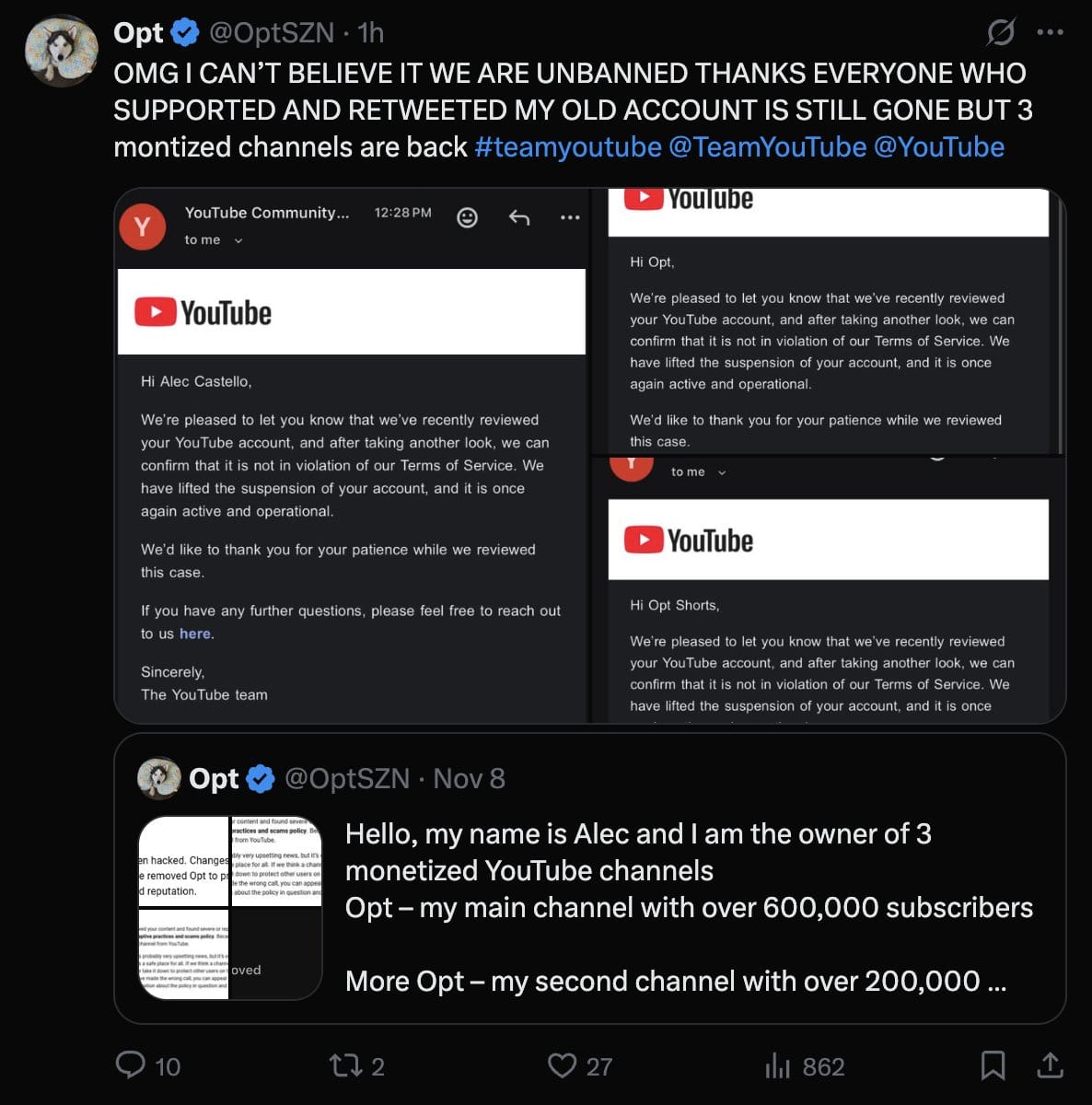

Enderman, a tech YouTuber with a nine-year-old channel and over 380,000 subscribers, had his account terminated without warning. His appeal was denied almost immediately. YouTube’s automated system allegedly linked his channel to another terminated account—a connection he says doesn’t exist and that no human reviewer actually verified.

Caleb C and countless other creators reported submitting detailed appeals explaining their content, only to receive rejection notices in less than one minute. The math doesn’t work: even a cursory human review of hours of video content would require significantly more time.

One creator making economic and fitness documentaries reported:

“My channels are still falsely terminated and haven’t been restored. I just make economic and fitness documentaries—a human would know if they checked. But YouTube never checked.”

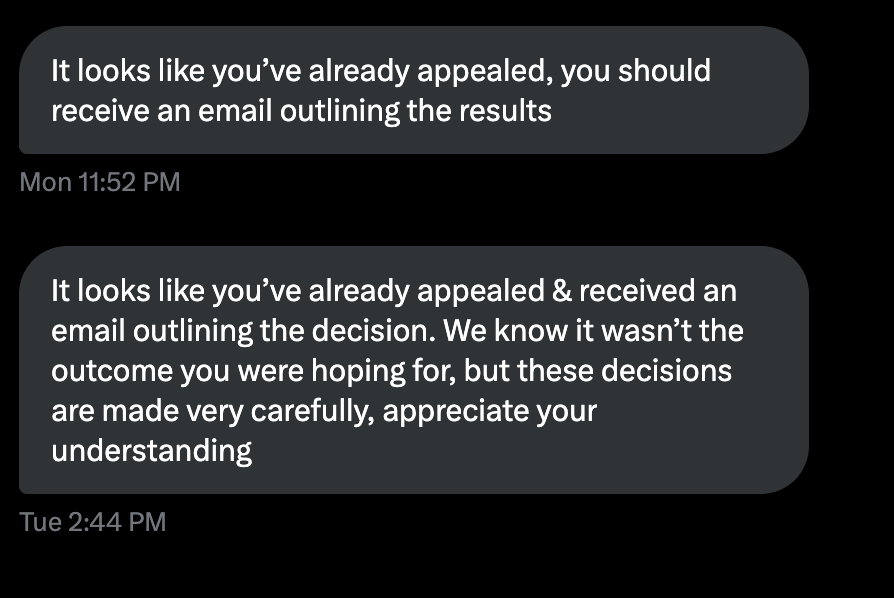

The Lie: “Appeals Are Manually Reviewed”

YouTube’s Public Claims

On November 8, 2025, as the controversy reached a fever pitch on social media, @TeamYouTube made a categorical public statement:

“Appeals are manually reviewed so it can take time to get a response.”

This claim was repeated across multiple platform communications:

- Appeals are handled by “human staff”
- “Manual review” processes are in place
- No AI involvement in termination or appeals decisions

The Reality: AI Automation at Every Stage

The evidence proving YouTube’s deception is overwhelming:

1. Sub-Minute Appeal Rejections

Creators documented appeal submissions and rejections with timestamps showing:

- Appeals submitted with detailed explanations
- Rejection notices received 2-5 minutes later
- Some rejections arriving in under 60 seconds

To put this in perspective: even if a reviewer watched content at 2x speed, they couldn’t possibly review hours of video content, read appeal explanations, cross-reference policies, and make a decision in under a minute.
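As a rough check on that claim, the Python sketch below computes a lower bound on human review time: watching the content once at a given playback speed, plus reading the appeal once. The function name and every input (three hours of video, a 300-word appeal, a reading speed of 250 words per minute) are illustrative assumptions, not figures from any documented case, and the bound assumes a reviewer watches all flagged content rather than spot-checking it.

```python
def min_review_seconds(video_hours: float, playback_speed: float = 2.0,
                       appeal_words: int = 300, reading_wpm: int = 250) -> float:
    """Lower bound on review time in seconds: watch the content once at the
    given playback speed, then read the appeal once. Policy lookup and the
    decision itself are ignored, so a real review would take longer."""
    watch_seconds = video_hours * 3600 / playback_speed
    read_seconds = appeal_words / reading_wpm * 60
    return watch_seconds + read_seconds


# Hypothetical case: three hours of video, appeal rejected after one minute.
observed_seconds = 60
needed_seconds = min_review_seconds(video_hours=3)
print(f"Minimum review: {needed_seconds / 60:.1f} min; observed: {observed_seconds / 60:.1f} min")
```

With these assumed inputs the lower bound is about 91 minutes, compared with a one-minute rejection.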

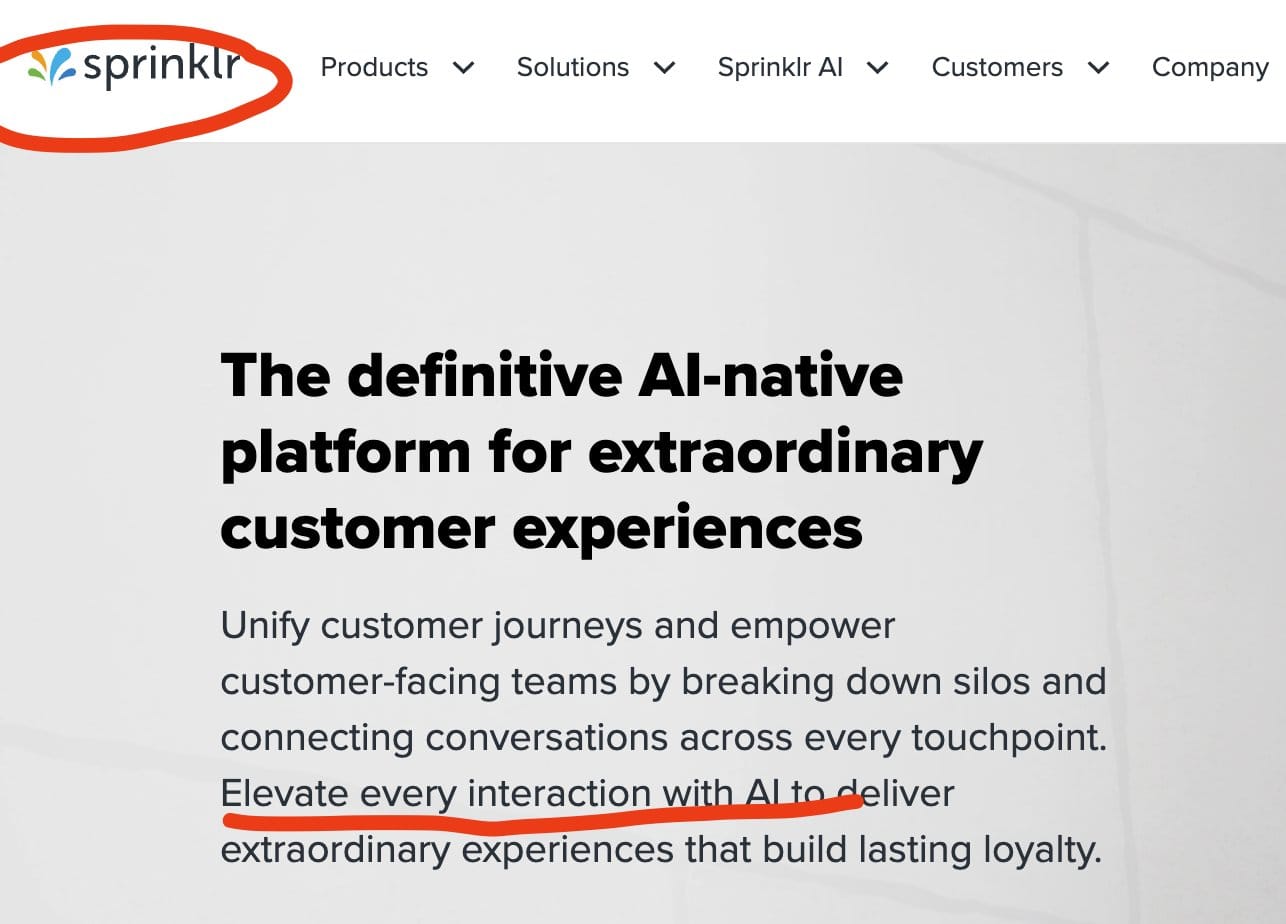

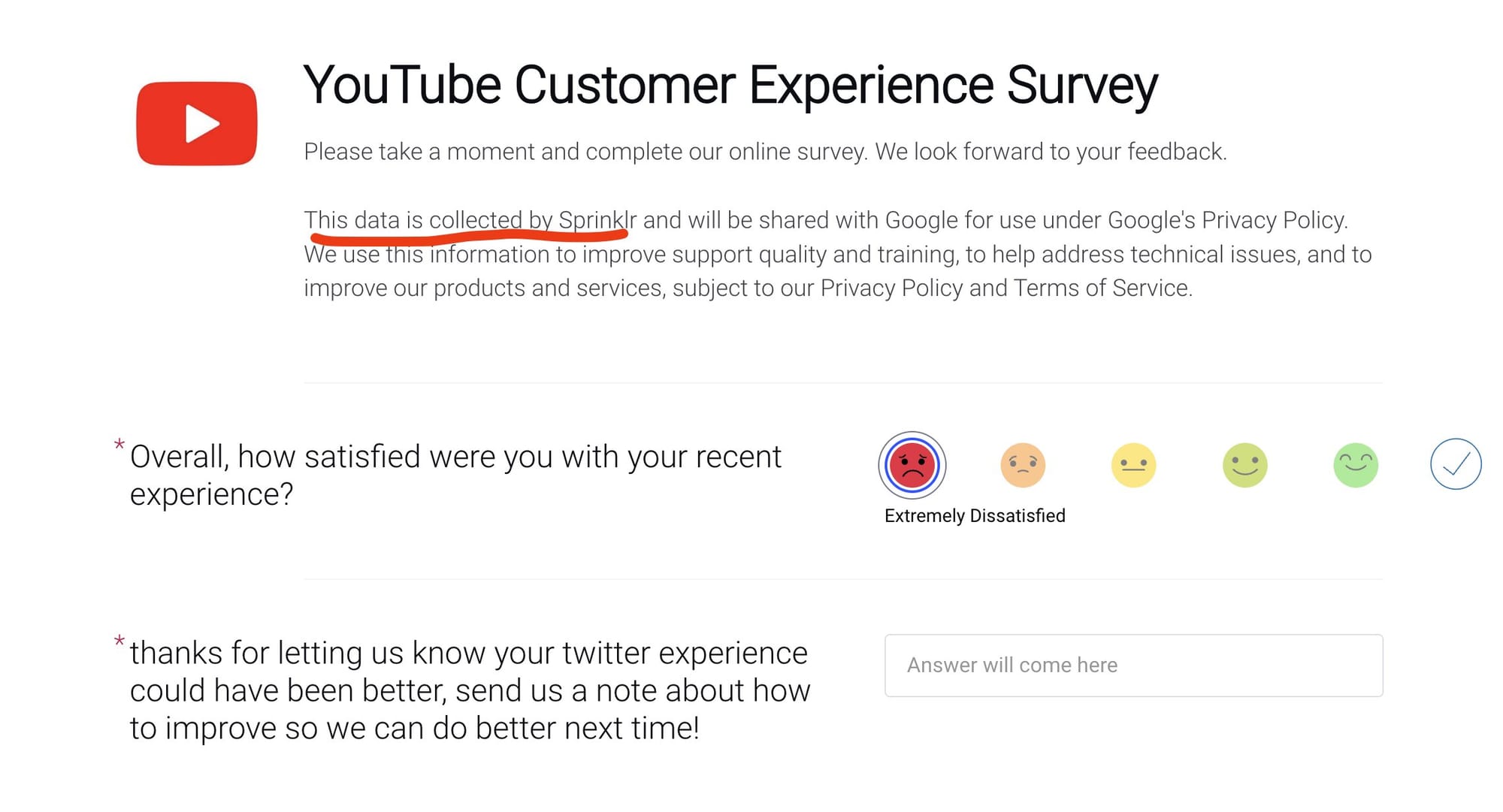

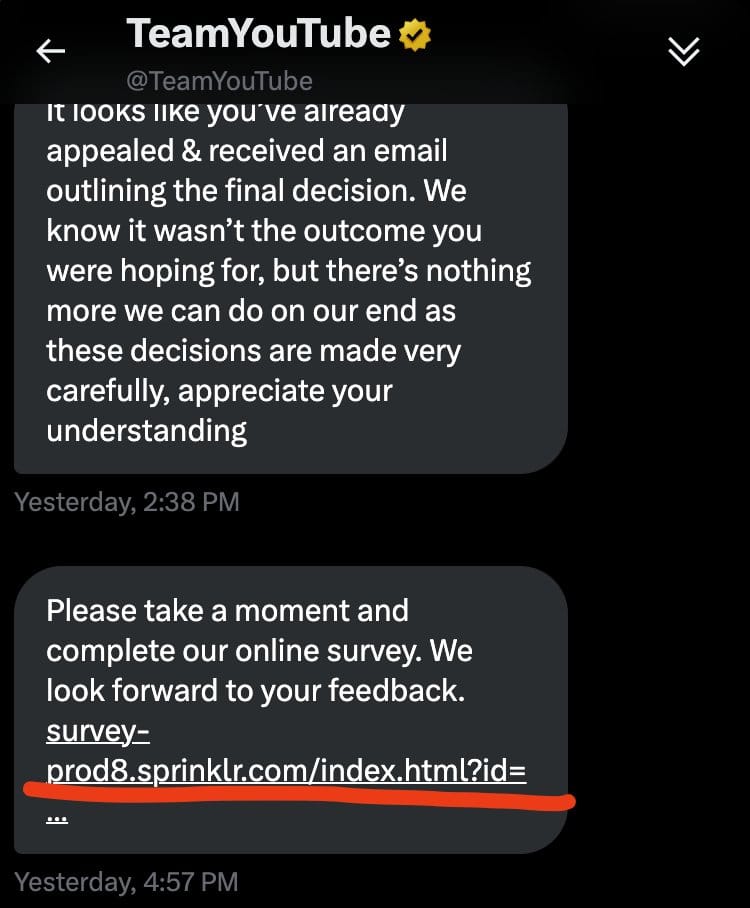

2. The Sprinklr Smoking Gun

This is where YouTube’s lie became undeniable. Creators who analyzed their communication metadata discovered that @TeamYouTube’s “manual” responses were coming from Sprinklr—an AI-powered automated customer service platform.

What is Sprinklr?

- AI-native customer experience platform specializing in automated messaging
- Handles 99% of inquiries with bots in some implementations
- Uses AI agents to generate responses based on customer relationship data
- Provides “suggested responses” that appear personalized but are AI-generated
- Operates at scale across social media, email, chat, and messaging platforms

One creator received a survey from Sprinklr after their appeal was rejected, confirming the automated nature of the entire process.

3. Automated Follow-Up Messages

Perhaps most damning: creators reported receiving automated rejection messages without sending new appeals:

“I didn’t send any new messages but got an automated reply that my appeal was rejected. The AI just kept responding based on my channel link, not any actual human review.”

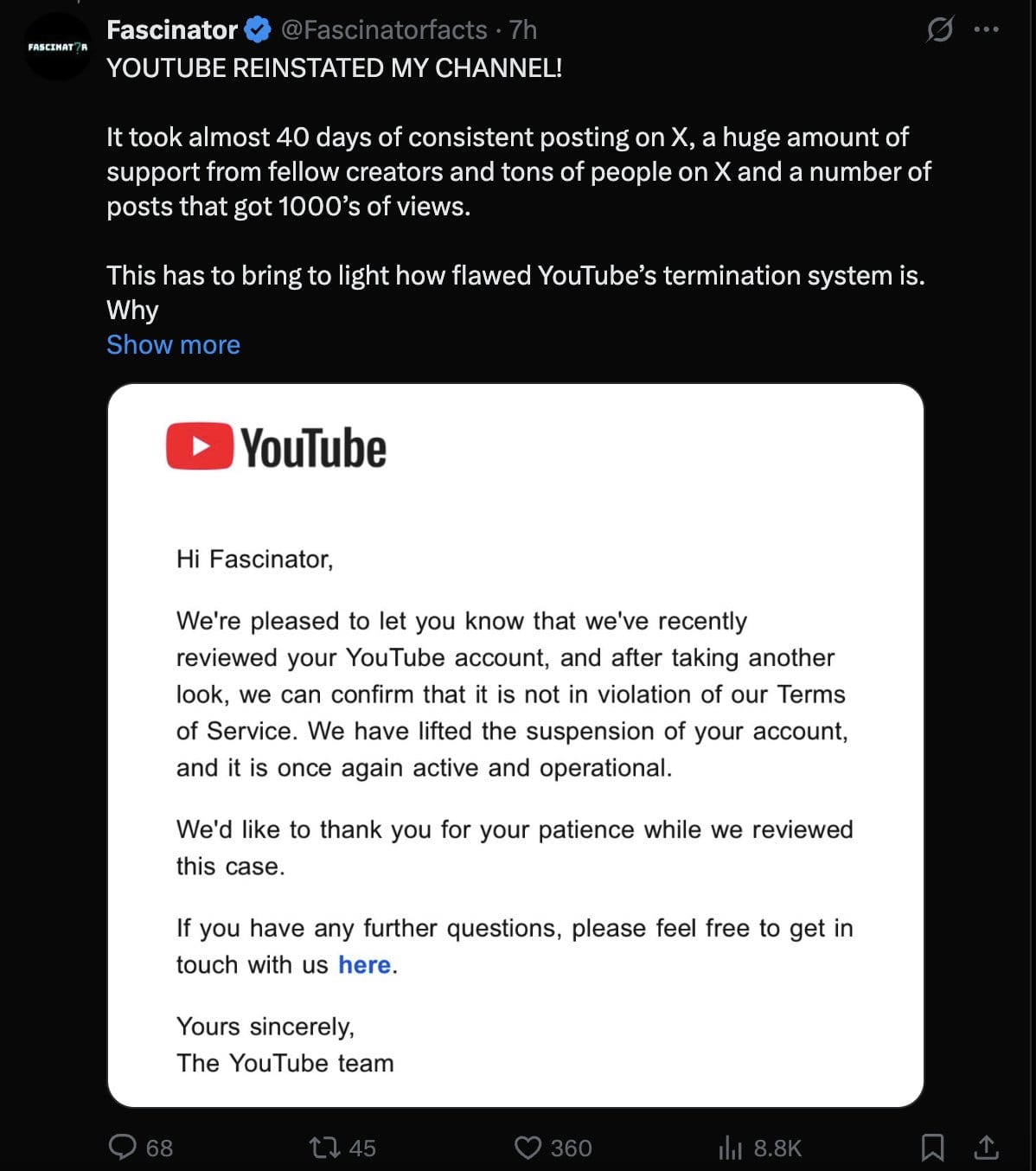

YouTube’s Partial Admission: Too Little, Too Late

November 10, 2025: The First Crack

After days of mounting pressure, YouTube admitted to using AI for handling creator appeals and customer support on November 10, 2025. But the admission came with heavy spin:

YouTube claimed Sprinklr was:

- “Primarily used as a Customer Relationship Management (CRM) tool”
- “A message routing system”
- Providing agents with “pre-approved templates or snippets”
- Not actually making decisions, just “speeding up responses”

The problem? This explanation doesn’t account for:

- Appeals rejected in under 60 seconds (no time for human review even with templates)
- Automated messages sent without creator input
- The sheer volume of appeals processed at impossible speeds
- Creators never getting responses from actual humans, even after multiple appeals

November 13, 2025: Doubling Down

On November 13, 2025, YouTube released a “comprehensive statement” that essentially doubled down:

- Reviewed “hundreds” of social media posts (out of hundreds of thousands of complaints)
- Upheld “the vast majority of termination decisions”
- Claimed “no bugs or known issues existed with its systems”
- Overturned only “a handful” of cases

Translation: YouTube reviewed a tiny fraction of appeals, found their AI system worked exactly as programmed (to terminate channels and reject appeals automatically), and called it a day.

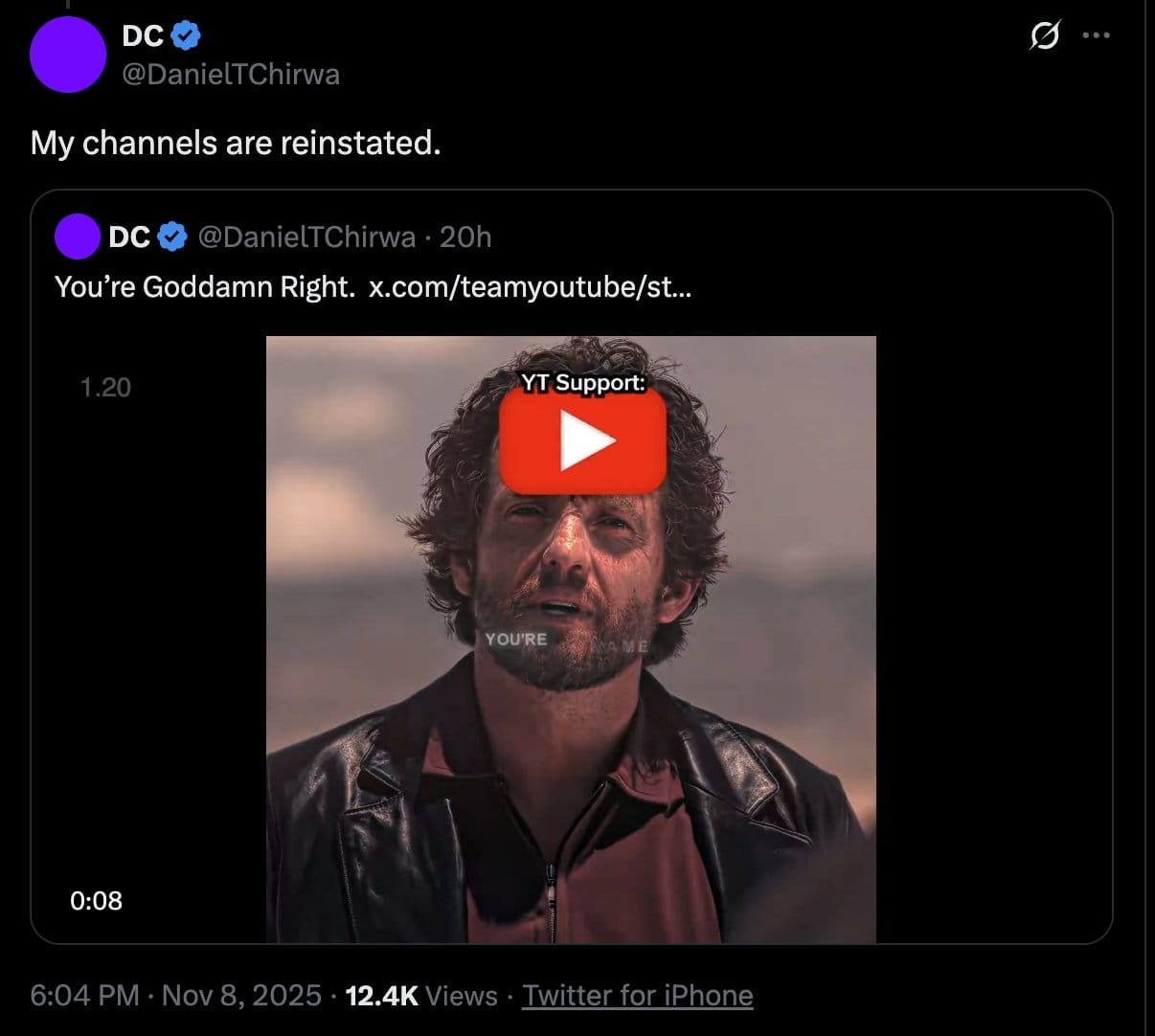

The Shift to “Actual Humans”

Recently, something changed. Creators began noticing that @TeamYouTube responses on X started sounding… human. The automated, template-like responses gave way to more nuanced, personalized replies.

Why the sudden change?

The most likely explanation: legal exposure. Once the extent of the automated deception became public, YouTube likely received urgent advice from their legal team to start involving actual humans—at least in visible public communications.

But for millions of terminated creators, the damage is done. Their channels remain terminated. Their appeals remain rejected. And YouTube’s AI continues to guard the gates.

The Legal Implications: FTC Act Section 5

What Constitutes a Deceptive Practice?

Under FTC Act Section 5 (15 U.S.C. §45), the Federal Trade Commission has authority to prevent “unfair or deceptive acts or practices in or affecting commerce.”

A practice is considered deceptive if:

1. There is a representation, omission, or practice that is likely to mislead consumers
2. The consumer’s interpretation is reasonable under the circumstances
3. The misleading representation is material (likely to affect consumer decisions)

How YouTube’s Actions Qualify

YouTube’s statements about manual review processes meet all three criteria:

1. Misleading Representation:

- YouTube explicitly stated appeals are “manually reviewed” by “human staff”
- This representation was made publicly, repeatedly, on official channels
- The actual process was AI-automated via Sprinklr

2. Reasonable Consumer Interpretation:

- Creators reasonably believed their appeals would receive human review
- They invested time writing detailed explanations assuming a human would read them
- They expected a fair process based on YouTube’s representations

3. Material to Consumer Decisions:

- Creators made decisions about whether and how to appeal based on these representations
- The belief in human review affected how creators structured their appeals
- Many creators might have pursued different legal or business strategies had they known appeals were AI-automated

The Penalty Structure

As of January 17, 2025, the FTC updated civil penalty amounts for inflation:

For violations of Section 5(m)(1)(A) (knowing violation of rule respecting unfair or deceptive acts or practices):

- $53,088 per violation (increased from $51,744 in 2024)

For violations of Section 5(m)(1)(B) (knowingly engaging in a practice the FTC has already determined to be unfair or deceptive in a final cease and desist order):

- $53,088 per violation

Calculating Potential Exposure

The penalty exposure depends on how “violations” are counted:

Conservative Calculation (Per False Statement): If each public false statement about manual review constitutes one violation:

- Multiple tweets, blog posts, and support articles claiming manual review
- Potential exposure: Millions of dollars

Moderate Calculation (Per Creator Misled): If each creator who relied on false statements constitutes a violation:

- Hundreds of thousands of creators filed appeals believing in manual review
- Potential exposure: Tens of billions of dollars

Aggressive Calculation (Per Appeal Fraudulently Processed): If each automated appeal that was claimed to be manual constitutes a violation:

- Hundreds of thousands to millions of appeals processed
- Potential exposure: $53,088 × [number of fraudulent appeals]
- Could exceed $50-100+ billion
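The three counting theories differ only in the multiplier. The sketch below applies the $53,088 maximum to hypothetical violation counts chosen to fall within the ranges described above (50 statements, 300,000 creators, 1.5 million appeals). These counts are assumptions for illustration, not established figures, and the result is a statutory ceiling rather than a predicted fine, since courts set actual penalty amounts case by case.

```python
PENALTY_PER_VIOLATION = 53_088  # maximum civil penalty per violation as of 2025, USD

# Hypothetical violation counts for each counting theory (illustrative only).
SCENARIOS = {
    "per false statement": 50,
    "per creator misled": 300_000,
    "per appeal processed": 1_500_000,
}


def max_exposure(violations: int, per_violation: int = PENALTY_PER_VIOLATION) -> int:
    """Statutory ceiling: number of violations times the per-violation maximum."""
    return violations * per_violation


for theory, count in SCENARIOS.items():
    print(f"{theory:<22} {count:>11,} violations -> up to ${max_exposure(count):,}")
```

Under these assumed counts the ceilings come to about $2.65 million, $15.9 billion, and $79.6 billion respectively.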

Precedent: FTC Enforcement Against Tech Platforms

The FTC has increasingly targeted tech platforms for deceptive practices:

Recent Examples:

- Amazon fined $25 million (2023) for Alexa privacy violations
- Fortnite/Epic Games fined $520 million (2022) for deceiving users about privacy and billing
- Twitter/X fined $150 million (2022) for misusing user data for targeted advertising
- Google fined $170 million (2019) for YouTube COPPA violations

If the allegations hold, the YouTube AI termination scandal would dwarf many of these cases in scope, affecting millions of creators.

The Technical Evidence: How AI Powered the Deception

Gemini AI: The Termination Engine

Multiple reports claim that YouTube deployed Google’s Gemini AI in the termination and appeals process:

Gemini’s Role:

1. Content Analysis: AI scanned videos for policy violations
2. Pattern Matching: Identified channels allegedly linked to previously terminated accounts
3. Bulk Processing: Enabled termination of millions of channels simultaneously
4. Appeals Handling: Generated rejection responses based on termination reasons

The Circumvention Policy AI: YouTube’s updated Circumvention Policy uses AI to detect and auto-terminate channels allegedly linked to banned creators through:

- Face recognition: Identifying creators who appear in videos
- Voice identification: Matching voice patterns across channels
- Content style analysis: Detecting similar editing styles, topics, and presentation
- Network analysis: Linking channels through metadata and behavioral patterns

The problem? These AI systems make mistakes—frequently:

- False positives from face recognition (similar-looking individuals)
- Voice matching errors (especially across languages and accents)
- Content style false positives (creators in same niches naturally have similar styles)
- Guilt by association (collaborators, family members, employees flagged)
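False positives at this scale are largely base-rate arithmetic: a small error rate applied to a very large population still yields a large absolute number of wrongly flagged channels. The sketch below uses made-up inputs (10 million channels screened, half of them legitimate, three candidate false positive rates). The rates are assumptions, not published YouTube figures, so treat the output as a way to see the scaling rather than as an estimate.

```python
def expected_false_positives(channels_screened: int, legit_share: float,
                             false_positive_rate: float) -> float:
    """Expected legitimate channels wrongly flagged:
    screened channels x share that are legitimate x false positive rate."""
    return channels_screened * legit_share * false_positive_rate


# Hypothetical inputs for illustration; not published YouTube figures.
SCREENED = 10_000_000
LEGIT_SHARE = 0.5

for fpr in (0.001, 0.01, 0.05):
    wrong = expected_false_positives(SCREENED, LEGIT_SHARE, fpr)
    print(f"false positive rate {fpr:.1%}: ~{wrong:,.0f} legitimate channels flagged")
```

Even at a 0.1% false positive rate, these assumed inputs yield about 5,000 wrongly flagged channels; at 1% the figure is about 50,000.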

Sprinklr: The Automation Platform

Sprinklr’s AI Capabilities in 2025:

According to Sprinklr’s own marketing materials and case studies:

1. AI Agents: “Built natively on the Sprinklr platform… to run end-to-end processes and automate complex tasks at scale”
2. 99% Bot Handling: Real-world implementations deflect “over 20 million cases a year” with AI handling “99% of inquiries”
3. Automated Response Generation: “AI analyzes the ongoing conversation… and automatically surfaces suggested responses”
4. 24/7 Self-Service: “AI-powered chatbots and voicebots to handle common customer questions… to give customers 24/7 self-service options”
5. Agentic AI: “Sprinklr natively infuses specialized, generative and agentic AI into the platform to effortlessly function alongside your teams”

Translation: Sprinklr is explicitly designed to automate customer service at scale with minimal human involvement—exactly the opposite of what YouTube claimed.

The Metadata Trail

Tech-savvy creators who examined the technical details of their @TeamYouTube interactions found:

- Sprinklr tracking codes in message metadata
- Automated routing identifiers showing messages never touched human inboxes
- Response timestamps inconsistent with human typing speeds
- Template signatures in supposedly personalized messages
- Identical phrasing across thousands of appeal rejections

This metadata evidence provides smoking-gun proof that contradicts YouTube’s claims of manual review.
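One part of that trail creators can check themselves is turnaround time. The sketch below takes pairs of ISO 8601 timestamps (appeal submitted, response received) and buckets each turnaround against the one-minute and five-minute thresholds discussed earlier. The sample timestamps and the `classify` helper are hypothetical; a fast response is consistent with automation but does not prove it on its own.

```python
from datetime import datetime

# Hypothetical log entries: (appeal submitted, response received).
APPEALS = [
    ("2025-11-08T14:02:10", "2025-11-08T14:02:51"),
    ("2025-11-08T15:30:00", "2025-11-08T15:34:12"),
    ("2025-11-09T09:00:00", "2025-11-10T11:15:00"),
]


def turnaround_seconds(submitted: str, received: str) -> float:
    """Seconds elapsed between submission and response."""
    delta = datetime.fromisoformat(received) - datetime.fromisoformat(submitted)
    return delta.total_seconds()


def classify(seconds: float) -> str:
    """Bucket a turnaround using the one- and five-minute thresholds."""
    if seconds < 60:
        return "under 1 minute"
    if seconds < 300:
        return "under 5 minutes"
    return "5 minutes or longer"


for submitted, received in APPEALS:
    secs = turnaround_seconds(submitted, received)
    print(f"{submitted} -> {received}: {secs:,.0f} s ({classify(secs)})")
```

Keeping the raw timestamps alongside screenshots lets anyone recompute these intervals later.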

The Business Impact: Destroyed Livelihoods

Economic Devastation for Creators

YouTube terminations don’t just delete videos—they destroy businesses and livelihoods:

Immediate Financial Impact:

- Loss of YouTube Partner Program revenue (ad revenue, memberships, Super Chat)
- Frozen AdSense accounts with unpaid earnings trapped
- Loss of sponsorship deals tied to channel metrics
- Destroyed affiliate marketing income streams

Long-Term Business Damage:

- Years of content creation erased instantly
- Audience relationships severed without warning
- Brand reputation damaged by termination stigma
- Search rankings lost (videos that ranked for key terms disappear)

Quantifying the Damage:

For a mid-sized creator (100,000 subscribers):

- Average annual revenue: $50,000-$150,000
- Frozen unpaid earnings: $5,000-$15,000
- Lost sponsorship deals: $20,000-$100,000
- Total immediate impact: $75,000-$265,000

Not every terminated channel is a mid-sized business, but even if only a small fraction were, the economic devastation to creators would run into the billions of dollars.
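The per-creator range above is the sum of the three component ranges, and any aggregate depends heavily on how many terminated channels were actually mid-sized businesses. The sketch below reproduces the sum and then scales it by two hypothetical shares of the 5 million figure; the shares are assumptions, not data. Like the estimate above, it adds a year of revenue to one-time losses.

```python
# Per-creator component ranges from the estimate above, in USD: (low, high).
COMPONENTS = {
    "annual revenue": (50_000, 150_000),
    "frozen unpaid earnings": (5_000, 15_000),
    "lost sponsorship deals": (20_000, 100_000),
}

low = sum(lo for lo, _ in COMPONENTS.values())
high = sum(hi for _, hi in COMPONENTS.values())
print(f"Per creator: ${low:,} to ${high:,}")  # Per creator: $75,000 to $265,000

TERMINATED = 5_000_000
# Hypothetical shares of terminated channels that were mid-sized creators.
for share in (0.001, 0.01):
    creators = round(TERMINATED * share)
    print(f"{share:.1%} ({creators:,} channels): ${creators * low:,} to ${creators * high:,}")
```

At 0.1% (5,000 channels) this gives $375 million to about $1.3 billion; at 1% (50,000 channels), $3.75 billion to $13.25 billion.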

The Chilling Effect on Free Expression

Beyond economics, the AI termination scandal creates a chilling effect:

Creators are self-censoring:

- Avoiding controversial topics (even legitimate educational content)
- Hesitant to criticize YouTube or Google
- Afraid to collaborate with terminated creators
- Reluctant to invest in content creation given termination risk

New creators are deterred:

- Seeing established channels terminated without warning
- Understanding appeals are meaningless (AI-rejected in seconds)
- Recognizing they have no recourse or due process
- Choosing other platforms or abandoning content creation entirely

This fundamentally undermines YouTube’s stated mission to “give everyone a voice.”

The Broader Pattern: YouTube’s History of Deceptive Practices

This Isn’t YouTube’s First Rodeo

The AI termination scandal fits a disturbing pattern:

2019: COPPA Violations

- FTC fined YouTube $170 million for illegally collecting children’s personal data
- YouTube claimed it wasn’t a kids’ platform while profiting from kid-targeted content
- Settlement required significant policy changes

2020: COVID-19 Misinformation

- YouTube claimed to remove dangerous health misinformation
- Investigation found monetized misinformation remained on platform for months
- Automated systems flagged legitimate health information while missing dangerous content

2021: Demonetization Without Transparency

- Creators had monetization disabled without clear explanations
- Appeals process opaque and inconsistent
- YouTube claimed human review but timelines suggested automation

2023: Ad Revenue Sharing Changes

- YouTube changed Shorts revenue sharing with minimal creator consultation
- Presented changes as creator-friendly while reducing many creators’ earnings
- Lack of transparency about algorithm changes affecting revenue

2024: Shadowbanning Denials

- YouTube repeatedly denied using “shadowbans” to limit content reach
- Creator testing demonstrated clear suppression of certain content types
- YouTube later admitted to “limited distribution” for borderline content (shadowbanning by another name)

Pattern Recognition: Trust Deficit

Each incident follows a familiar pattern:

1. YouTube implements policy or system changes affecting creators
2. Creators report problems and demand transparency
3. YouTube denies or minimizes the issue
4. Evidence mounts, forcing partial admissions
5. YouTube makes minimal changes while protecting underlying systems
6. Repeat

The AI termination scandal represents the most egregious example yet—affecting millions of creators and involving provably false public statements about automation.

What Creators Can Do: Legal and Practical Options

Immediate Steps for Terminated Creators

1. Document Everything

- Screenshot all communications with @TeamYouTube and support
- Record timestamps of appeals submitted and rejections received
- Save email metadata showing Sprinklr tracking codes
- Archive your content if you have local copies
- Document financial losses (frozen earnings, lost sponsorships, etc.)
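If you saved the raw source of a support email (many mail clients can show or download the original message as an `.eml` file), a short script can list any headers that mention a given marker. The sketch below uses Python's standard `email` package. The marker string `"sprinklr"` is an assumption, since the article does not specify what the tracking codes look like, and the header in the sample message is invented. @TeamYouTube replies on X are not emails, so this applies only to email correspondence.

```python
from email import message_from_string
from email.policy import default


def find_marker_headers(raw_email: str, marker: str = "sprinklr") -> list[str]:
    """Return 'Name: value' for every header whose name or value contains
    `marker` (case-insensitive). The default marker is an assumption."""
    msg = message_from_string(raw_email, policy=default)
    needle = marker.lower()
    return [f"{name}: {value}" for name, value in msg.items()
            if needle in f"{name} {value}".lower()]


# Invented sample message; the X-Example-Route header is hypothetical.
SAMPLE = (
    "From: support@example.com\n"
    "X-Example-Route: sprinklr-queue-7\n"
    "Subject: Your appeal\n"
    "\n"
    "We have reviewed your appeal.\n"
)
print(find_marker_headers(SAMPLE))  # ['X-Example-Route: sprinklr-queue-7']

# Usage with a saved message (hypothetical file name):
# with open("appeal_reply.eml", encoding="utf-8") as f:
#     for line in find_marker_headers(f.read()):
#         print(line)
```

An empty result does not mean a message was handled by a human; it only means the chosen marker does not appear in the headers.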

2. File Formal Appeals

Despite the automation, continue filing appeals:

- Use YouTube Studio’s official appeal process
- Submit detailed explanations of why your content is legitimate
- Request the specific policy violations that led to termination
- Demand human review (explicitly state this in your appeal)

3. Escalate Through Multiple Channels

- Contact Creator Support directly through YouTube Studio
- Tweet at @TeamYouTube (public pressure matters)
- Post in Creator Community forums
- Reach out to your Partner Manager if you have one

4. Preserve Legal Rights

- Do not accept settlement offers without consulting an attorney
- Save all Terms of Service versions you agreed to
- Document your content creation history (backups, scripts, production notes)
- Identify witnesses who can attest to your content legitimacy

Collective Action Options

Class Action Lawsuit Potential

The AI termination scandal has classic class action elements:

- Common questions of law and fact: Did YouTube make false statements about manual review?
- Numerosity: Millions of affected creators
- Typicality: Similar experiences across creator base
- Adequacy: Representative plaintiffs can be identified

Potential claims:

- Breach of contract (if YouTube’s Terms of Service or policies promised manual review)
- Fraud/misrepresentation (false statements about appeals process)
- Unjust enrichment (YouTube profited from creators’ content then terminated without due process)
- Violation of state consumer protection laws

FTC Complaint

Individual creators can file complaints with the FTC:

How to file:

1. Visit FTC.gov/complaint
2. Select “Internet Services, Online Shopping, or Computers”
3. Describe YouTube’s false statements about manual review
4. Provide evidence (screenshots, timestamps, metadata)
5. Explain financial harm suffered

What the FTC considers:

- Number of complaints received
- Evidence of widespread harm
- Deceptive practice affecting commerce
- Company’s history of violations

With hundreds of thousands of potential complaints, the FTC would face significant pressure to investigate.

Alternative Platforms to Consider

Don’t put all your eggs in one basket. Diversify your content:

Video Platforms:

- Vimeo (creator-friendly, no ads on basic plan)
- Rumble (growing platform, creator revenue share)
- Odysee (decentralized, blockchain-based)
- TikTok (short-form, but different audience)
- Instagram Reels (Meta ecosystem integration)

Direct-to-Audience and Self-Hosted Options:

- Patreon (direct creator-fan relationship)
- Substack (newsletter + video capabilities)
- WordPress (full control, can embed video)
- Ghost (creator-focused CMS)

Decentralized Platforms:

- PeerTube (federated YouTube alternative)
- DTube (blockchain-based video platform)
- LBRY/Odysee (cryptocurrency-based rewards)

Best practice: Maintain presence on multiple platforms and always keep local backups of your content.

Privacy Implications: What This Means for All YouTube Users

Your Data is Powering the AI Termination Machine

While creators bore the brunt of this scandal, all YouTube users should be concerned about how their data is being used:

AI Training Data:

- Your viewing history trains recommendation algorithms
- Your comments train content moderation AI
- Your facial appearance (if you appear in videos) feeds face recognition
- Your voice (if you speak in videos) trains voice identification

The Circumvention Policy AI: YouTube’s face recognition, voice identification, and behavioral analysis systems don’t just affect creators—they’re analyzing all users:

- Face recognition across all videos (viewers and creators)
- Behavioral tracking to link accounts and identify “ban evasion”
- Network analysis of who watches, comments, and interacts with terminated creators
- Guilt by association algorithmic linking

Privacy Risks from Automated Decision-Making

The YouTube AI termination scandal demonstrates the dangers of opaque automated systems:

Lack of Due Process:

- No meaningful human review
- No opportunity to confront evidence
- No explanation of specific violations
- No effective appeals process

Error Amplification:

- AI mistakes multiply at scale (5 million terminations)
- False positives destroy livelihoods
- No mechanism to correct errors quickly
- Automated systems reinforce initial errors

Surveillance Creep:

- More invasive AI monitoring to enforce policies
- Cross-platform tracking (YouTube, Gmail, Google accounts)
- Behavioral profiling without consent
- Data sharing with AI systems without transparency

Protecting Your Privacy on YouTube

Given YouTube’s demonstrated willingness to use AI systems without transparency:

Account Security:

- Review your YouTube privacy settings
- Pause watch history to limit recommendation training data
- Use Incognito Mode for sensitive topics
- Separate personal and creator accounts

Data Minimization:

- Don’t link unnecessary Google services to YouTube
- Limit personal information in your profile
- Avoid face/voice in videos if privacy-concerned
- Use a VPN to obscure location data

Content Strategy:

- Keep local backups of all content you create
- Document your creative process to prove originality
- Avoid controversial topics if your channel is your livelihood (sad but practical)
- Diversify platforms to reduce dependency on YouTube

For a comprehensive guide to protecting your privacy on social media platforms including YouTube, see our Complete Guide to Social Media Privacy in 2025.

What Happens Next: Potential Outcomes

Scenario 1: FTC Investigation and Enforcement

Likelihood: Moderate to High

Given the scale of the deception and the clear evidence:

Timeline:

- Q1 2026: Complaint gathering and preliminary investigation
- Q2 2026: Formal investigation launched, document requests to YouTube/Google
- Q3-Q4 2026: Evidence review, depositions, negotiation
- 2027: Settlement or enforcement action

Potential outcomes:

- Civil penalties: Could reach billions given per-violation calculation
- Injunctive relief: Requirements to implement actual human review
- Transparency mandates: Forced disclosure of AI usage in moderation
- Monitoring regime: FTC oversight of YouTube’s appeals process for years

Scenario 2: Class Action Litigation

Likelihood: High

Law firms are almost certainly evaluating class action potential:

Legal theories:

- Breach of contract (Terms of Service promised fair process)
- Consumer fraud (false statements about manual review)
- Unjust enrichment (YouTube profited from terminated creators’ content)

Potential recovery:

- Economic damages: Lost earnings, frozen payments, business losses
- Punitive damages: To punish and deter deceptive conduct
- Injunctive relief: Court-ordered changes to appeals process

Timeline:

- Q4 2025 - Q1 2026: Class certification motions
- 2026-2027: Discovery, depositions, expert witnesses
- 2027-2028: Settlement negotiations or trial
- 2028+: Appeals, fund distribution to class members

Scenario 3: Congressional Investigation

Likelihood: Moderate

This scandal touches on multiple Congressional concerns:

Relevant Committees:

- Senate Commerce Committee: Platform accountability
- House Energy and Commerce: Consumer protection
- Senate Judiciary: Antitrust and competition (Google’s market power)

Potential actions:

- Hearings: YouTube/Google executives testifying under oath
- Legislation: Requirements for human review in content moderation
- Regulatory pressure: Coordination with FTC for enforcement

Scenario 4: YouTube’s Preemptive Response

Likelihood: Very High (Already Happening)

To minimize legal exposure, YouTube will likely:

Short-term changes:

- ✅ Shift to actual human responders (already started)
- ✅ Overturn some high-profile terminations (happening selectively)
- Issue clarifications about Sprinklr’s “limited” role
- Blame “miscommunication” rather than admit deception

Long-term changes:

- Modify Terms of Service to explicitly allow AI in appeals
- Add disclaimers about automated systems
- Implement “hybrid” review (AI screens, humans rubber-stamp)
- Increase review times to create appearance of human involvement

What they won’t do:

- Admit to deliberately deceptive statements
- Restore all 5 million terminated channels
- Compensate creators for lost earnings
- Implement meaningful due process protections

Scenario 5: The Status Quo

Likelihood: Moderate

The pessimistic outcome where:

- FTC investigation gets bogged down or results in minimal fine
- Class actions settle for pennies on the dollar with no admission of wrongdoing
- YouTube makes cosmetic changes but maintains AI-driven termination/appeals
- Creators remain at mercy of opaque automated systems
- The cycle repeats with the next policy update

Why this could happen:

- Google/YouTube’s enormous legal and lobbying resources
- Regulatory capture and tech industry influence
- Difficulty proving “knowing” deception versus “miscommunication”
- Creator dependency on platform (can’t afford to leave)

The Bigger Picture: AI Accountability in Tech Platforms

YouTube is the Canary in the Coal Mine

This scandal represents something larger than one platform’s mistakes—it’s a preview of the AI accountability crisis facing all tech platforms:

The Core Problem:

Tech platforms increasingly use AI to make decisions affecting millions of people:

- Content moderation: What speech is allowed
- Account termination: Who can use the platform
- Monetization: Who can earn money
- Reach and visibility: What content gets seen

But they refuse to be transparent about:

- How the AI systems work
- What data trains them
- How decisions are made
- How errors are corrected
- Whether humans are actually involved

The YouTube scandal proves:

1. Platforms will lie about AI usage when automation is controversial
2. “Human review” claims are often false (automated systems rubber-stamped as human decisions)
3. Scale incentivizes deception (manual review of millions of cases is impossible, so platforms automate and lie)
4. Lack of transparency enables abuse (without metadata analysis, creators couldn’t prove Sprinklr automation)
5. Self-regulation doesn’t work (platforms only change behavior when facing legal/regulatory pressure)

What Needs to Change: Policy Recommendations

For Regulators (FTC, DOJ, International Authorities):

1. Mandate AI disclosure: Platforms must clearly state when AI makes decisions
2. Prohibit false claims about human review: Make it illegal to claim human involvement when using automation
3. Require transparency reports: Platforms must publish data on:
   - Number of terminations/suspensions (human vs. AI)
   - Appeal outcomes (human vs. AI review)
   - Error rates and overturn statistics
   - AI system accuracy metrics
4. Establish due process minimums:
   - Right to know specific violations
   - Right to meaningful human review
   - Right to appeal with actual human consideration
   - Reasonable timeframes for review
5. Penalties with teeth: $53,088 per violation should be the floor, not the ceiling
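To illustrate what the overturn statistics in a transparency report might look like, the sketch below computes overturn rates separately for human and automated review. The `ReviewStats` class and every count in it are hypothetical; they illustrate the metric, not any platform's actual data.

```python
from dataclasses import dataclass


@dataclass
class ReviewStats:
    """Appeal counts for one review path in a hypothetical transparency report."""
    appeals: int
    overturned: int

    @property
    def overturn_rate(self) -> float:
        """Share of appeals that reversed the original decision."""
        return self.overturned / self.appeals if self.appeals else 0.0


# Illustrative counts only; not real data.
REPORT = {
    "human review": ReviewStats(appeals=20_000, overturned=3_000),
    "automated review": ReviewStats(appeals=400_000, overturned=2_000),
}

for path, stats in REPORT.items():
    print(f"{path}: {stats.appeals:,} appeals, {stats.overturn_rate:.1%} overturned")
```

Publishing both rates side by side would let outsiders see whether appeals routed to automation are reversed far less often than those seen by people.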

For Platforms (YouTube, Google, and Others):

1. Be honest about AI usage: Tell users when AI makes decisions
2. Provide actual human review: If you promise it, deliver it
3. Explain decisions: Tell users specifically what they did wrong
4. Create real appeals processes: Not AI rubber-stamps
5. Compensate for errors: When false terminations destroy livelihoods, make it right
6. Independent oversight: Allow third-party audits of AI systems

For Creators and Users:

1. Demand transparency: Don’t accept vague explanations
2. Document everything: Screenshot, timestamp, save metadata
3. Collective action: Individual complaints are ignored; class actions get attention
4. Diversify platforms: Don’t depend on one company for your livelihood
5. Support regulation: Advocate for laws that protect your rights


Conclusion: A Reckoning is Coming

YouTube’s AI termination scandal represents a watershed moment in the tech industry’s relationship with creators, users, and regulators. The platform’s demonstrably false claims about manual review—contradicted by overwhelming evidence of Sprinklr AI automation and sub-60-second appeal rejections—constitute exactly the kind of deceptive practice that FTC Act Section 5 was designed to prevent.

The Bottom Line

- Nearly 5 million channels were terminated by AI systems
- YouTube explicitly claimed human review of appeals
- Evidence proves appeals were AI-automated (Sprinklr platform, sub-minute rejections, metadata trails)
- This constitutes deceptive practice under FTC Act Section 5
- Penalties could reach $53,088 per violation, potentially billions in total fines
- Creators suffered massive economic harm: lost earnings, destroyed businesses, frozen revenue

Why This Matters

This isn’t just about YouTube. It’s about whether tech platforms can lie to users about how their automated systems work. It’s about whether “human review” means anything, or whether it’s just a comforting fiction while AI systems make life-altering decisions in milliseconds.

It’s about whether creators and users have any rights at all when platforms wield AI systems as judge, jury, and executioner—with no transparency, no due process, and no accountability.

The Path Forward

The only way this changes is through pressure:

- Legal pressure from class actions and FTC enforcement
- Economic pressure from creators diversifying to other platforms
- Political pressure from Congressional investigations and new legislation
- Social pressure from continued public attention to the deception

YouTube got caught lying. Whether they face meaningful consequences for that lie will determine not just the future of YouTube, but the future of AI accountability across the entire tech industry.

The creators who lost their channels, their livelihoods, and their creative work deserve justice. The millions of users whose data trains these AI systems deserve transparency. And all of us who depend on digital platforms deserve honesty about when machines, not humans, are making the decisions that shape our digital lives.

The ball is now in the FTC’s court. With clear evidence of deceptive practices affecting millions of people, the question isn’t whether YouTube violated the law—it’s whether regulators will enforce it.

Key Takeaways

- ✅ YouTube falsely terminated nearly 5 million channels using AI systems including Gemini
- ✅ Appeals rejected in under 60 seconds: impossible for human review
- ✅ YouTube claimed “manual review” while using Sprinklr AI automation
- ✅ Metadata proves AI automation: Sprinklr tracking codes in communications
- ✅ FTC Act Section 5 violations: Deceptive practices regarding AI usage
- ✅ Penalties up to $53,088 per violation as of 2025 (updated from $51,744)
- ✅ Potential fines in the billions depending on how violations are counted
- ✅ Creators suffered massive losses: frozen earnings, destroyed businesses
- ✅ YouTube shifted to human responses after public exposure (legal damage control)
- ✅ Class action lawsuits likely: breach of contract, fraud, consumer protection
- ✅ Sprinklr handles up to 99% of inquiries with bots in some implementations: it is explicitly designed for AI automation at scale
- ✅ Pattern of deception: YouTube’s history of false claims (COPPA, demonetization, shadowbanning)

Protect your privacy and creator rights. Diversify your platform presence, document all interactions with platforms, and demand transparency about AI usage.

Related Reading:

- YouTube Privacy Configuration: A 2025 Technical Guide
- Complete Guide to Social Media Privacy in 2025
- Content Creator Privacy Guide: Protect Your Digital Life While Building Your Brand

Have you been affected by YouTube’s AI terminations? Share your story in the comments below. Collective documentation of these cases strengthens potential legal action.

Disclaimer: This article is for informational purposes only and does not constitute legal advice. Creators affected by channel terminations should consult with qualified attorneys regarding their specific situations.